This is an updated version of a previous post.

It covers all areas: donor, patient, marker testing, and component preparation.

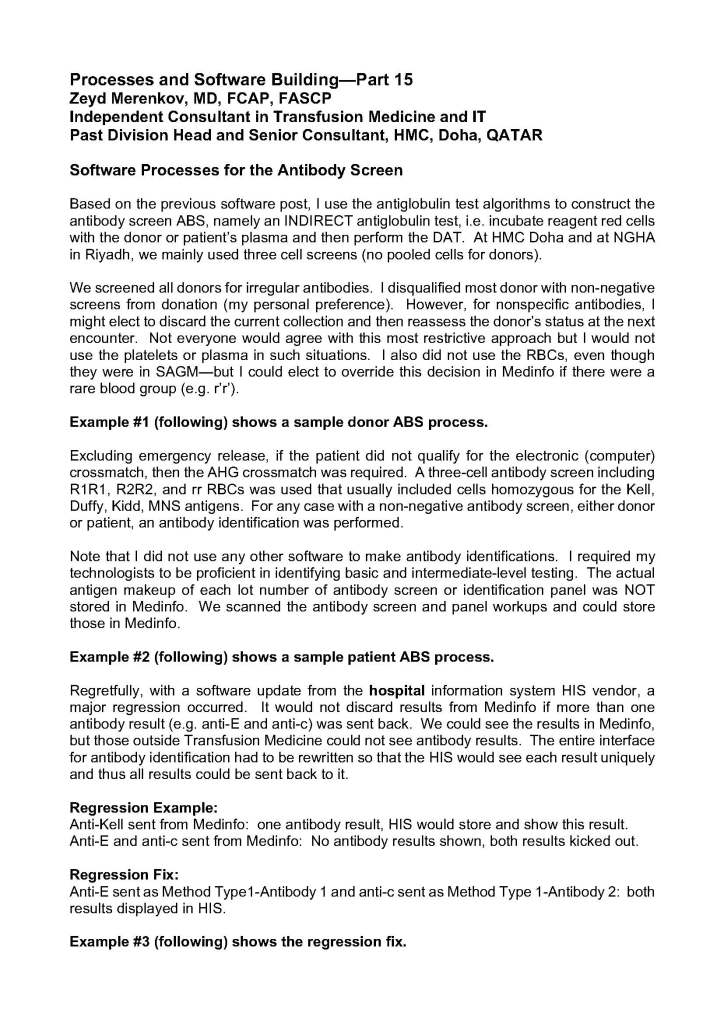

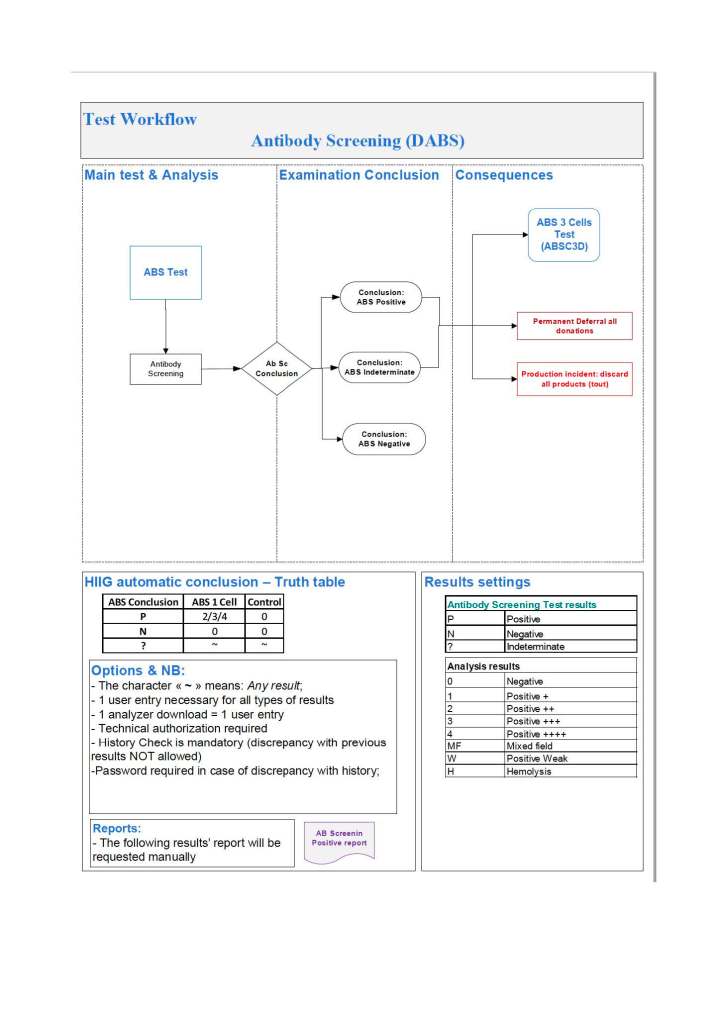

This post is mainly about building processes for a non-turnkey system, such as the Medinfo Hematos IIG software that I have worked with in several countries, but there are also a few words about turnkey systems for general laboratories.

This has been a collaborative effort between the software vendor’s engineers, my Super Users, and myself. This pluralistic approach has been most productive.

A turnkey system has already defined most of the basic processes, and those processes have been specifically approved by a regulatory agency such as the US FDA. There is little customization beyond formatting screens and reports, and instrument interfaces are also mainly predefined. This requires much less thought and planning than a custom-built system designed around the site’s actual workflows, but it can be an exercise in putting a round peg in a square hole. You don’t always get what you want or need.

In the locations where I collaborated in setting up the Medinfo Hematos IIG program, we did not follow the US FDA but mainly the Council of Europe (CE) standards, since these were much more customizable. We could modify them and add criteria specific to our country and region (e.g. rules for donor qualification for local pathogens). This has always been my preferred approach. Also, the USA does not use the full ISBT specification for its labels.

My approach is to start with a frame of reference (CE) and then optimize it for our local needs. Unfortunately for blood banking, the FDA has far fewer approved options than other regions, including in the preparation of blood components: for example, it does not allow pooled buffy coat platelets, and it has not approved automated blood component production such as Reveos or world-class pathogen-inactivation technologies such as Mirasol.

If you have invested the time to map detailed workflows across all processes and tests, much of this can be translated readily into software processes, but first you must study the flows and determine where they can be optimized. This requires studying the options in the new software to see which ones you can use best.

I always liked Occam’s Razor, i.e. “entia non sunt multiplicanda praeter necessitatem” (entities should not be multiplied beyond necessity): the simpler the better, as long as it meets your needs. If the manual processes are working well and can be translated into the new system, do so. Change them for optimization only if necessary.

Most of my career has been spent overseas with staff from many different countries and backgrounds, most of whom were not native English speakers. The wording of the processes is very important. Think of the additional obstacle of working with complicated software in a language that is not your mother tongue! Also consider the differences between American English, British English, and international English. I always had the Super Users read my proposed specifications and then asked them to repeat back what I had written or said. There were many surprises!

I think of Aesop’s fable about the mother who gave birth to an ugly baby that looked like a monkey. To her, he was still the most beautiful baby, and she entered him in a beauty contest. In other words, to a mother her child is perfect!

It is essential to follow the manufacturer’s recommendations when building tests, including the special automated processing and pathogen-inactivation processes. For example, we had multiple ABO and D typing tests, and they did not necessarily agree on which results were acceptable for automated release. The same is true for many other tests.

Example: one method for Rh(D) typing stated that only results in {0, 2+, 3+, 4+} were acceptable; all other results required manual review and/or additional testing. Another accepted only results in {0, 3+, 4+}. Thus we had to build separate D typing processes for each methodology.
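The method-specific acceptance rule can be pictured as a lookup keyed by methodology. This is a hypothetical sketch, not a Medinfo setting: the method names and the function `auto_interpret_d` are illustrative, and only the two sets of acceptable grades come from the example above.

```python
# Hypothetical methodology names; the acceptable grade sets are the two
# D typing methods described in the text.
ACCEPTABLE_D_GRADES = {
    "D_METHOD_1": {"0", "2+", "3+", "4+"},
    "D_METHOD_2": {"0", "3+", "4+"},
}


def auto_interpret_d(method: str, grade: str) -> str:
    """Return an automated D interpretation, or flag the result for review.

    A grade outside the method's acceptable set is never auto-released.
    """
    allowed = ACCEPTABLE_D_GRADES.get(method)
    if allowed is None:
        raise KeyError(f"no D typing process built for method {method!r}")
    if grade not in allowed:
        return "MANUAL REVIEW"
    return "D negative" if grade == "0" else "D positive"


# The same 2+ reaction is auto-released under one method but not the other.
print(auto_interpret_d("D_METHOD_1", "2+"))  # D positive
print(auto_interpret_d("D_METHOD_2", "2+"))  # MANUAL REVIEW
```

Keeping one table per methodology, rather than a single shared rule, is what allows separate D typing processes to run side by side.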

Another consideration is whether to offer all processes globally or restrict them to one site. I favor allowing access to all methodologies at all sites, in case of a disaster where tests have to be performed at another site. This means that if you send an order over an interface from the hospital system to the blood bank system, the receiving (blood bank) end chooses which methodology to use, i.e. the mapping is not one-to-one but one-to-many.

If we changed the equipment at one site to match that used at another site, we didn’t have to modify our software. Even if a site lacked the equipment or reagents, we could still build the methodology into the system and leave its settings inactive until needed.
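A minimal sketch of a one-to-many order mapping with per-site activation. The order and methodology codes (`ABO_D`, `ABO_D_GEL`, and so on) and the site names are hypothetical and do not come from any real Medinfo configuration.

```python
from collections import defaultdict
from typing import Dict, List, Set

# One hospital order code maps to several blood bank methodologies.
ORDER_TO_METHODS: Dict[str, List[str]] = {
    "ABO_D": ["ABO_D_GEL", "ABO_D_MICROPLATE", "ABO_D_TUBE"],
}

# Every methodology is built once, globally; activation is per site.
active_at_site: Dict[str, Set[str]] = defaultdict(set)
active_at_site["SITE_A"].update({"ABO_D_GEL", "ABO_D_TUBE"})
active_at_site["SITE_B"].add("ABO_D_MICROPLATE")


def methods_for_order(order_code: str, site: str) -> List[str]:
    """Methodologies the receiving site may choose for an incoming order."""
    return [m for m in ORDER_TO_METHODS.get(order_code, [])
            if m in active_at_site[site]]


def activate(method: str, site: str) -> None:
    """Switch on an already-built methodology, e.g. after new equipment arrives."""
    active_at_site[site].add(method)


print(methods_for_order("ABO_D", "SITE_B"))  # ['ABO_D_MICROPLATE']
activate("ABO_D_GEL", "SITE_B")
print(methods_for_order("ABO_D", "SITE_B"))  # ['ABO_D_GEL', 'ABO_D_MICROPLATE']
```

When a site acquires new equipment, neither the interface mapping nor the methodology build changes; only the site's activation set does.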

Finally, there is the issue of middleware. Many instrument vendors offer it, but it brings problems with support and regression errors whenever you update either the middleware or the blood bank software. Medinfo itself could serve as the middleware, so there was less chance of errors when updating the software. In fact, I never used any middleware with Medinfo.

This is a revised version of a previous post.

As much as 90% of RBC component allocation can be performed without an actual crossmatch test (AHG or immediate-spin), provided that certain criteria are met.

Enforcing these rules, however, can be cumbersome unless one has blood bank software that verifies each rule. In the Medinfo patient module, the transfusion history database is checked automatically. If the rules are met, Medinfo allows the selection (allocation) of RBC units without a crossmatch test. Otherwise, it checks whether an AHG crossmatch has been done within the past 3 days; if not, it prompts for new crossmatch testing on a new specimen. If the situation is urgent, one can switch to Emergency Mode and release components without the crossmatch.
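The decision sequence just described can be sketched as follows. This is an illustration, not Medinfo’s implementation: the eligibility rules are not listed here, so they are reduced to a single hypothetical flag `eligible_without_xm`, and “within the past 3 days” is assumed to mean at most 72 hours.

```python
from datetime import datetime, timedelta
from typing import Optional

# Assumption: "within the past 3 days" is read as at most 72 hours.
AHG_XM_VALIDITY = timedelta(days=3)


def allocation_path(eligible_without_xm: bool,
                    last_ahg_xm: Optional[datetime],
                    now: datetime,
                    emergency: bool = False) -> str:
    """Decide how RBC units may be allocated for a patient.

    eligible_without_xm -- hypothetical flag: True if all transfusion-history
                           rules for release without a crossmatch are met.
    last_ahg_xm         -- time of the most recent AHG crossmatch, if any.
    emergency           -- True if the user has switched to Emergency Mode.
    """
    if eligible_without_xm:
        return "ALLOCATE WITHOUT CROSSMATCH"
    if last_ahg_xm is not None and now - last_ahg_xm <= AHG_XM_VALIDITY:
        return "ALLOCATE ON EXISTING AHG CROSSMATCH"
    if emergency:
        return "EMERGENCY RELEASE WITHOUT CROSSMATCH"
    return "NEW SPECIMEN AND CROSSMATCH REQUIRED"


now = datetime(2024, 1, 10, 8, 0)
print(allocation_path(False, now - timedelta(days=2), now))
# ALLOCATE ON EXISTING AHG CROSSMATCH
print(allocation_path(False, now - timedelta(days=4), now))
# NEW SPECIMEN AND CROSSMATCH REQUIRED
```

Emergency Mode is checked last here, so it is only reached when a new specimen and crossmatch would otherwise be required.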

Principle:

In selected patient categories, a classical crossmatch may not be required for the release of RBC components. The criteria are specified here as they applied in my build of the Medinfo Hematos IIG (HIIG) computer system.

Policy:

This is an update of a previous post.

I have been involved with planning for several plasma fractionation projects in the Middle East.

Many clients expressed interest in using local plasma to make plasma derivatives (e.g. factor concentrates, intravenous gamma globulin, albumin), feeling that local plasma was safer than imported plasma. Some of these products are in short supply on the world market, so the only way to ensure their uninterrupted availability is to manufacture them for local consumption.

Still, the major issue today is that it is difficult for any country in the region to collect enough plasma to make such a project feasible. When I first considered such planning, we were looking at as much as 250,000 liters of raw plasma annually. Since then, newer technologies have made much smaller batches cost-effective. Alternatively, one could charge higher prices for products made from smaller batches of local plasma.
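A back-of-the-envelope calculation shows why this volume is hard to reach. The per-donation plasma volumes below are assumptions for illustration only (roughly 0.25 L of recovered plasma per whole blood donation and 0.65 L per apheresis plasma collection), not figures from these projects.

```python
import math


def donations_needed(target_liters: float, liters_per_donation: float) -> int:
    """Number of donations required to reach an annual plasma target."""
    return math.ceil(target_liters / liters_per_donation)


TARGET_LITERS = 250_000  # annual target mentioned in the text

# Assumed per-donation volumes (illustrative only).
for label, volume in [("recovered plasma (whole blood)", 0.25),
                      ("apheresis plasma", 0.65)]:
    print(f"{label}: {donations_needed(TARGET_LITERS, volume):,} donations/year")
```

Under these assumptions the target corresponds to about one million whole blood donations, or roughly 385,000 apheresis collections, per year.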

Still, plasma will likely have to be imported to sustain a plant. Plasma donor qualification regulations differ from country to country. Many jurisdictions may do less screening and testing of plasma donors than is done for regular blood and apheresis donors, while other countries apply the same requirements to both commercial plasma and blood donations.

In this era of emerging infectious diseases, I personally favor using the stringent blood donor criteria, the same as for routine collections. It is not the pathogens we know but the unknown ones that are potentially the most dangerous.

In addition to building a fractionation plant, one must train staff for this highly technical operation. This may require developing a special curriculum to prepare students for these jobs.

To export the plasma to certain regions, one may have to use plasma quarantine. In this protocol, plasma is held or quarantined until the next donation is collected and passes screening. This requires a robust blood bank production software such as Medinfo to track serial donations.
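The tracking logic behind plasma quarantine can be sketched as below. The data model and the minimum interval between donations (`min_interval`, set to a placeholder of 60 days) are hypothetical; the actual interval depends on the importing region’s requirements.

```python
from dataclasses import dataclass
from datetime import date, timedelta
from typing import List, Optional


@dataclass
class Donation:
    donor_id: str
    unit_id: str
    collected: date
    screen_negative: Optional[bool]  # None = screening not yet complete


def quarantine_status(unit: Donation, history: List[Donation],
                      min_interval: timedelta = timedelta(days=60)) -> str:
    """Return 'RELEASE', 'HOLD' or 'DO NOT RELEASE' for a quarantined plasma unit.

    min_interval is a placeholder for the required gap between the
    quarantined donation and the follow-up donation that clears it.
    """
    if unit.screen_negative is None:
        return "HOLD"
    if unit.screen_negative is False:
        return "DO NOT RELEASE"
    later = [d for d in history
             if d.donor_id == unit.donor_id and d.collected > unit.collected]
    # Any failed follow-up donation blocks release of the held unit.
    if any(d.screen_negative is False for d in later):
        return "DO NOT RELEASE"
    # Release only once a sufficiently later donation has passed screening.
    if any(d.screen_negative is True and d.collected - unit.collected >= min_interval
           for d in later):
        return "RELEASE"
    return "HOLD"


held = Donation("D1", "U1", date(2024, 1, 1), True)
follow_up = Donation("D1", "U2", date(2024, 3, 15), True)
print(quarantine_status(held, [held]))             # HOLD
print(quarantine_status(held, [held, follow_up]))  # RELEASE
```

The function needs the donor’s full donation history, which is why serial-donation tracking in the production software is a prerequisite for quarantine.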

There are other processes to consider as well, such as how to develop a transport network that keeps plasma frozen at -80 °C in a region where ambient temperatures are very high.

I would recommend a graded approach to developing such an industry. First, I would negotiate a plasma self-sufficiency arrangement: we would collect plasma locally, export it to a manufacturing plant in another country, and have the derivatives returned to us. This may require inspection by the accreditation agency of the processing country before the raw plasma can be imported for manufacture.

Since it is unlikely that any one country has enough plasma for manufacture, recruiting neighboring countries to participate in a manufacturing plant is important. The technology for such a plant is complex, so establishing a joint venture with a plasma industry company is essential. Some manufacturers are very keen to develop extra capacity, since there is a worldwide shortage of plasma fractionation capacity, and are even willing to help obtain external plasma sources for such a plant.

Such a plant is an excellent way to develop local talent, including training local staff to be the industrial engineers in the plasma fractionation process. It would take approximately two years of on-site training at a plasma fractionation plant to prepare engineers, provided they have studied the necessary science and mathematics subjects.

Such a program would take several years of planning and development. Some of the major steps needed include:

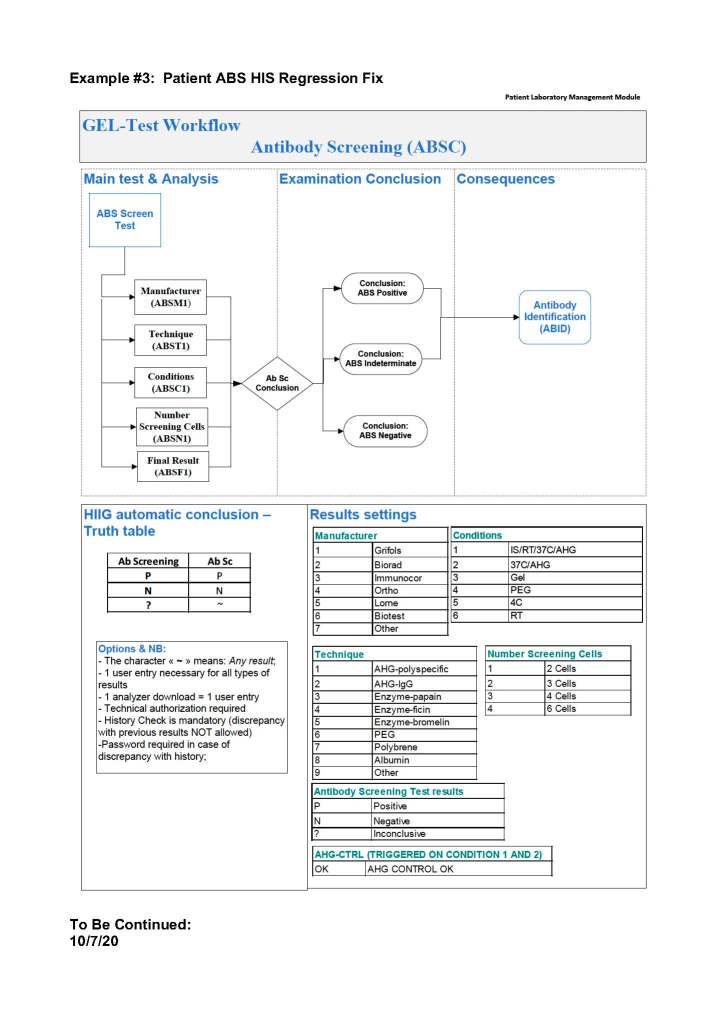

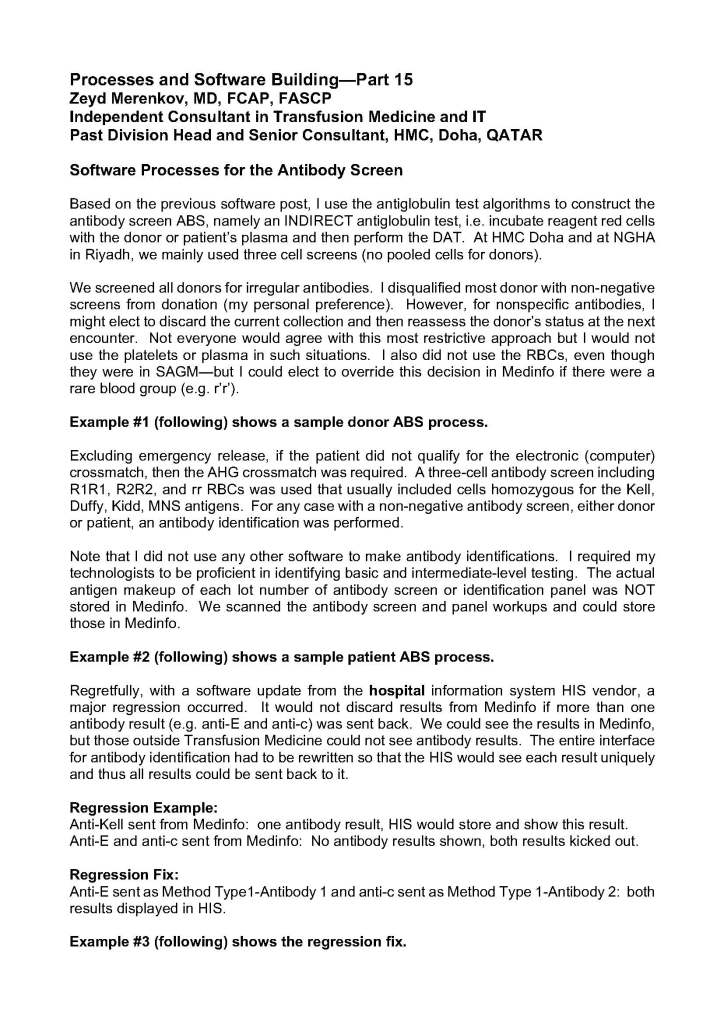

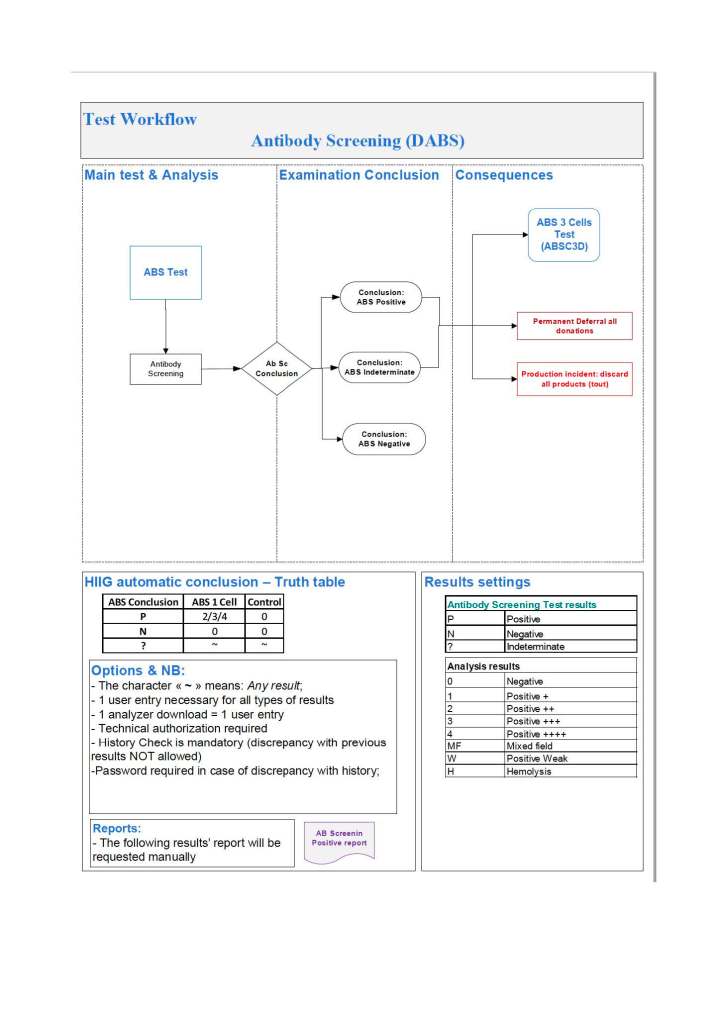

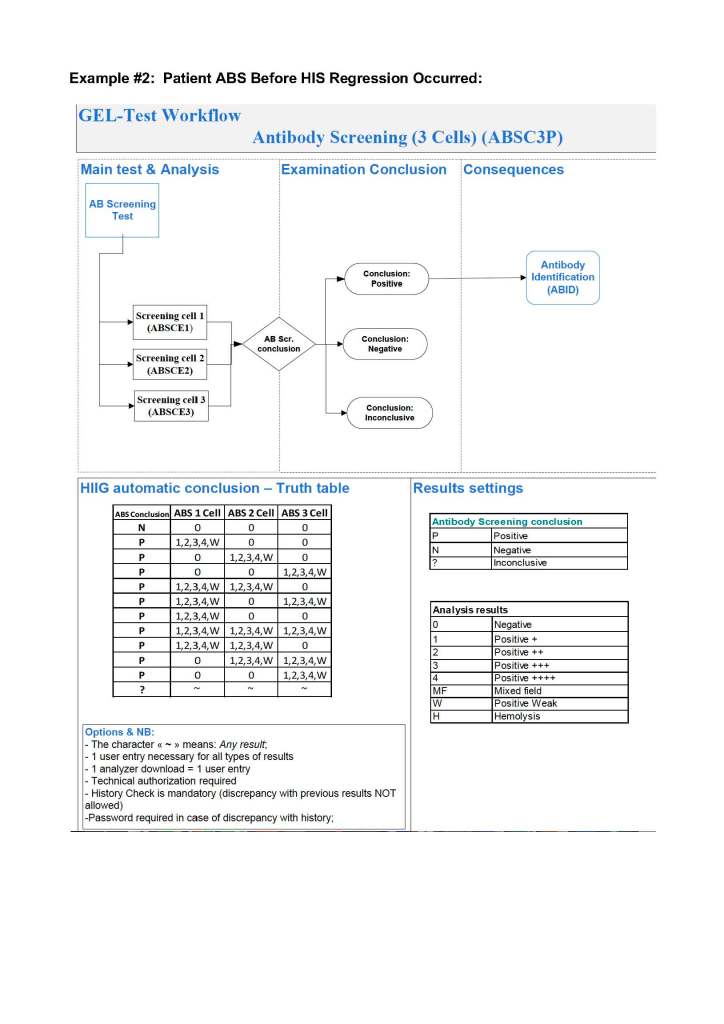

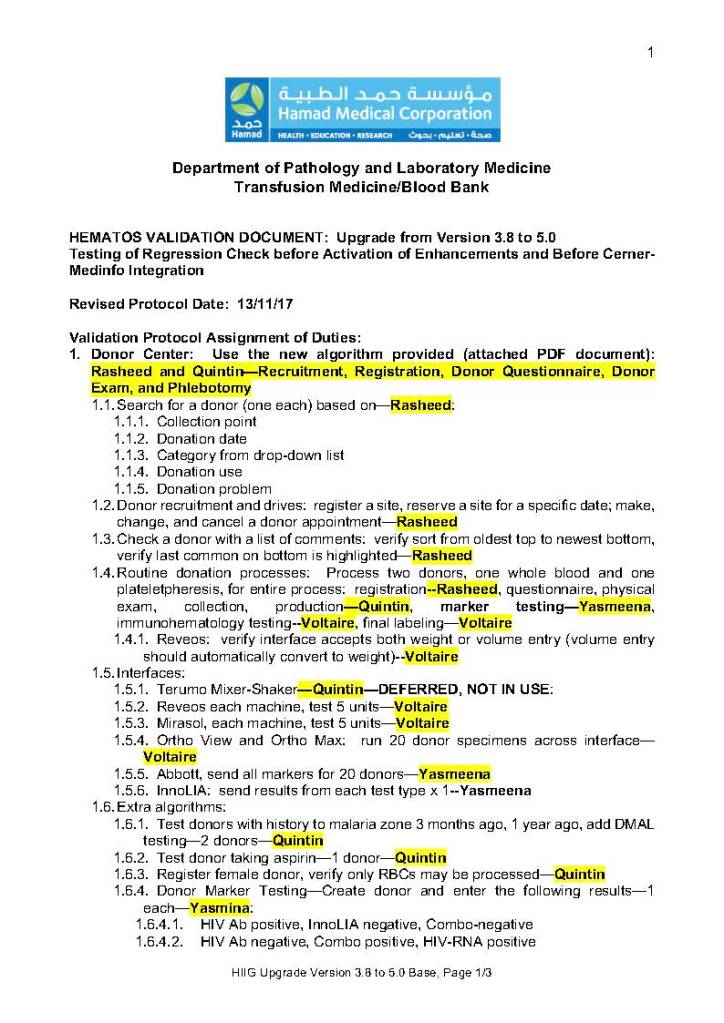

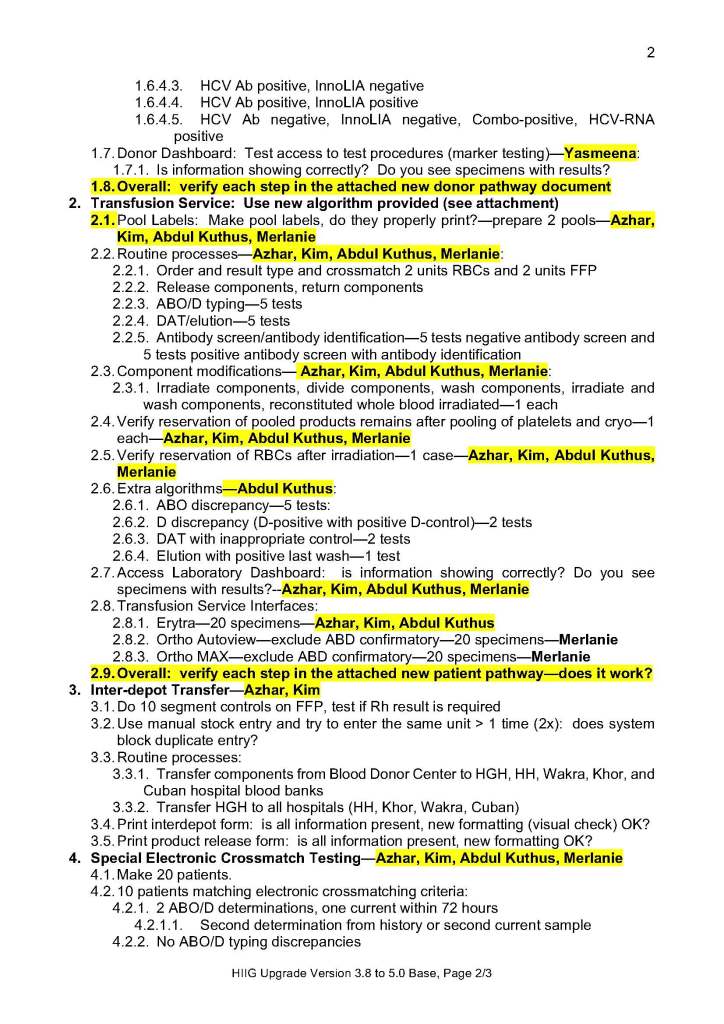

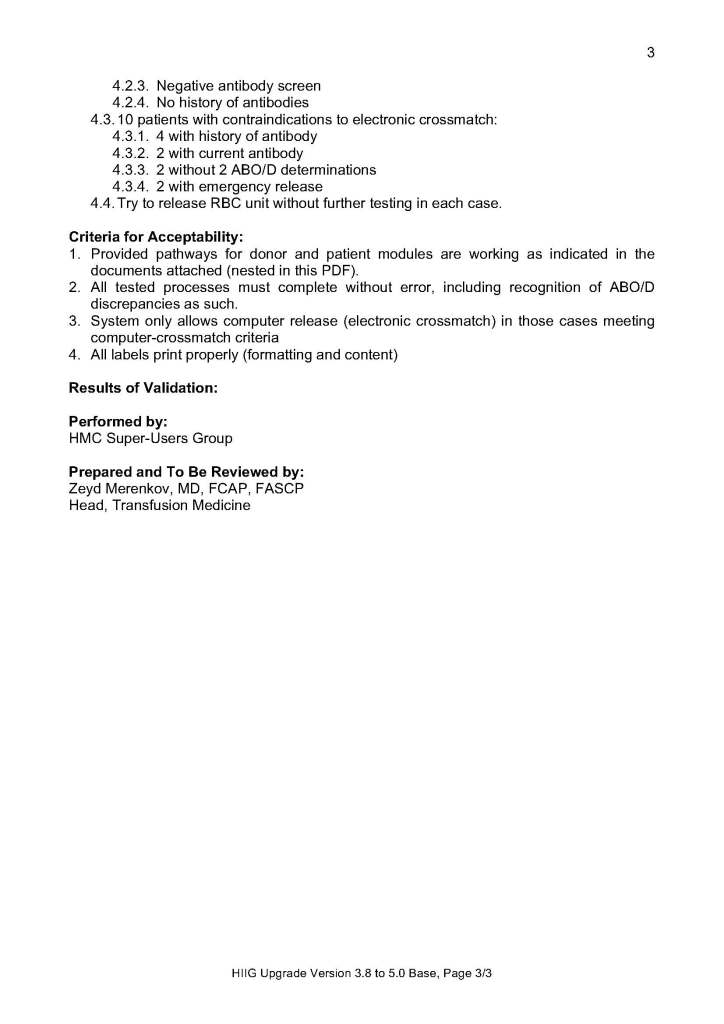

When a new software version was introduced in my system, the vendor first did a preliminary round of testing before submitting it to my Medinfo Laboratory Information System (LIS) team for validation. I then prepared a training session for the Super Users and assigned them validation tasks. When the validation was completed and accepted by me, the software was submitted to the Hospital Information System (HIS) department, which conducted its own final acceptance testing. Following this, transfusion medicine staff were trained before the new software went live.
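The sign-off sequence described here is strictly ordered, and a tracking tool can enforce that order. A minimal sketch, with hypothetical stage names paraphrasing the steps in the text:

```python
from typing import List

# Stages in the order described in the text (names are hypothetical labels).
STAGES: List[str] = [
    "vendor preliminary testing",
    "LIS team validation",        # Super User validation, accepted by the division head
    "HIS final acceptance",
    "transfusion staff training",
    "go-live",
]


class Upgrade:
    """Tracks one software upgrade through the required stages, in order."""

    def __init__(self, version: str) -> None:
        self.version = version
        self.completed: List[str] = []

    def complete(self, stage: str) -> None:
        if len(self.completed) == len(STAGES):
            raise ValueError("all stages are already complete")
        expected = STAGES[len(self.completed)]
        if stage != expected:
            raise ValueError(f"cannot complete {stage!r}; next stage is {expected!r}")
        self.completed.append(stage)

    @property
    def is_live(self) -> bool:
        return self.completed == STAGES


upgrade = Upgrade("5.0")
upgrade.complete("vendor preliminary testing")
try:
    upgrade.complete("HIS final acceptance")  # attempts to skip LIS validation
except ValueError as err:
    print(err)
```

Rejecting an out-of-order stage, rather than merely warning, keeps an upgrade from reaching HIS or go-live before the laboratory has accepted the validation.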

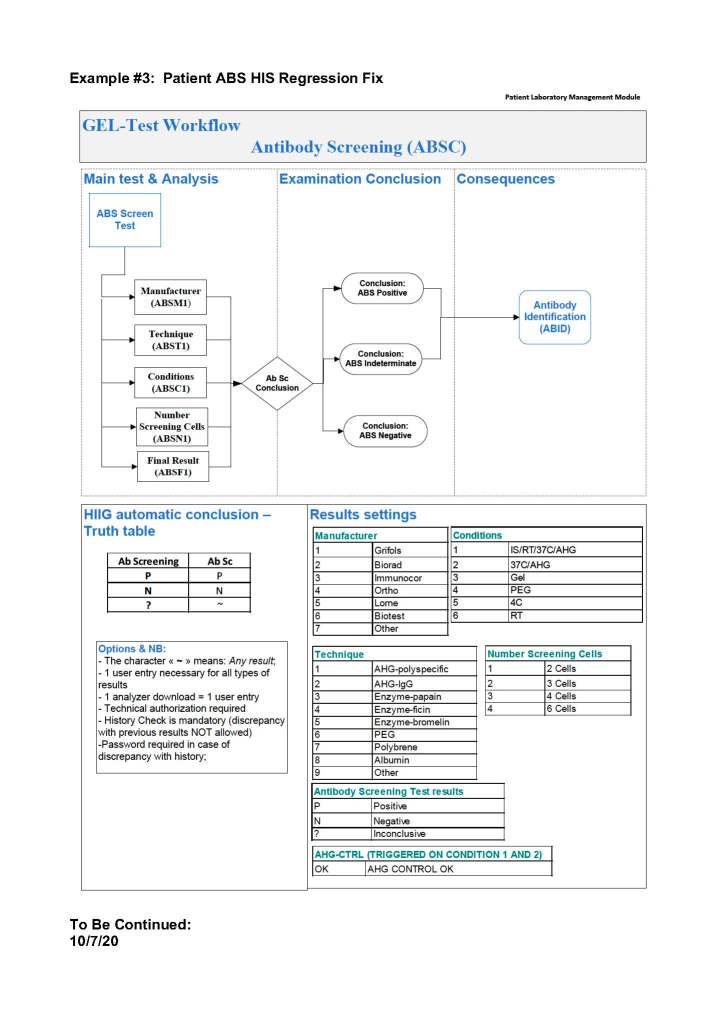

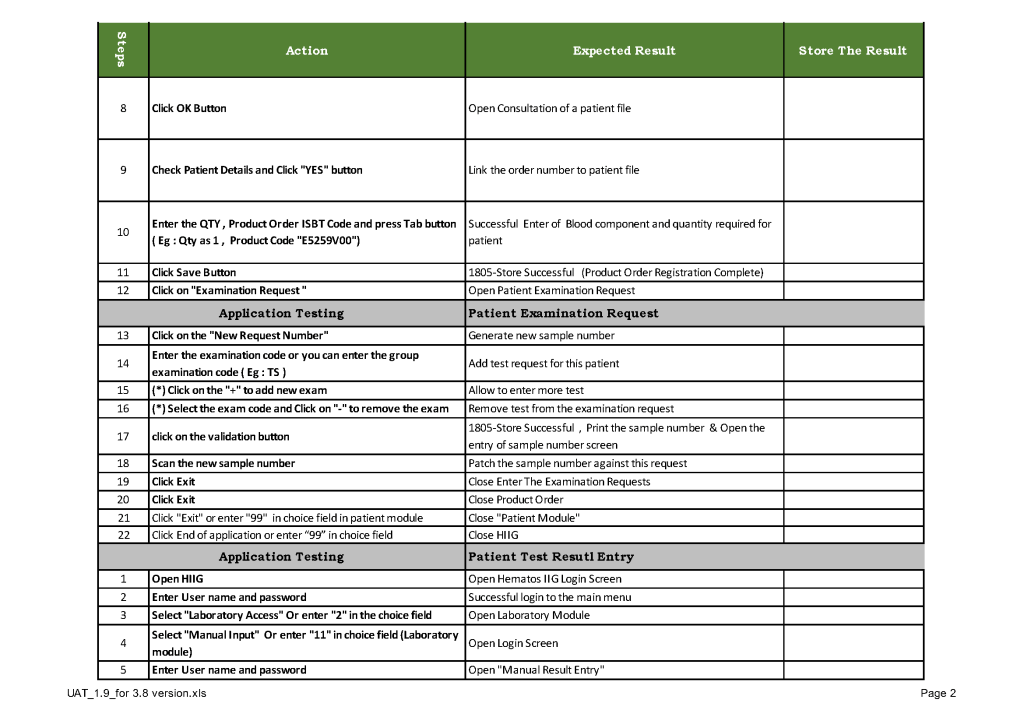

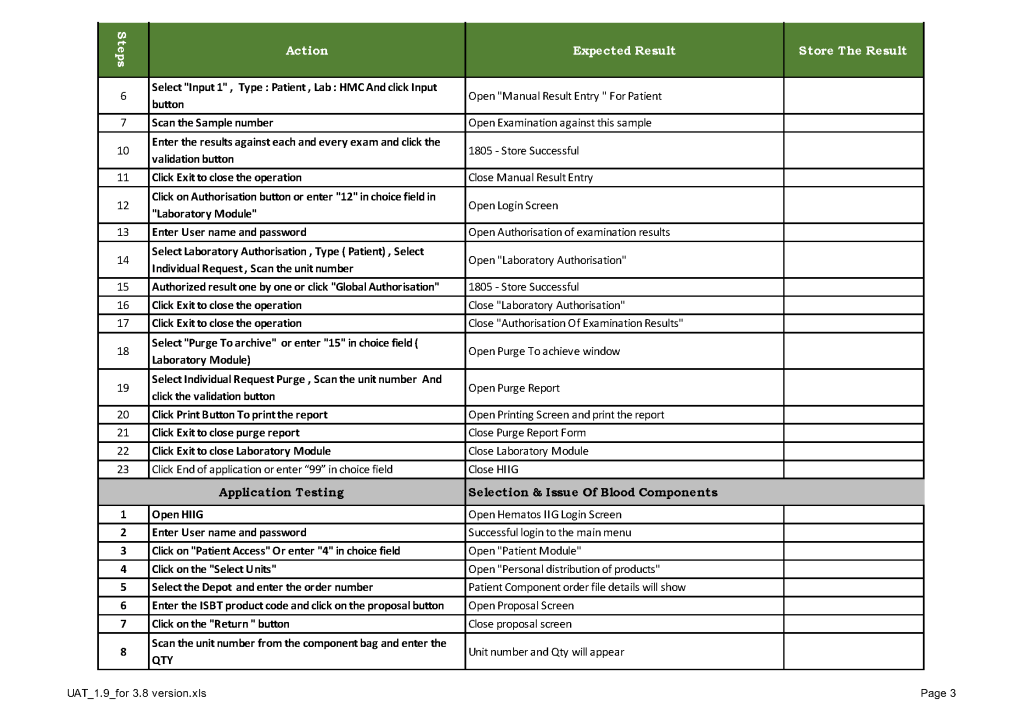

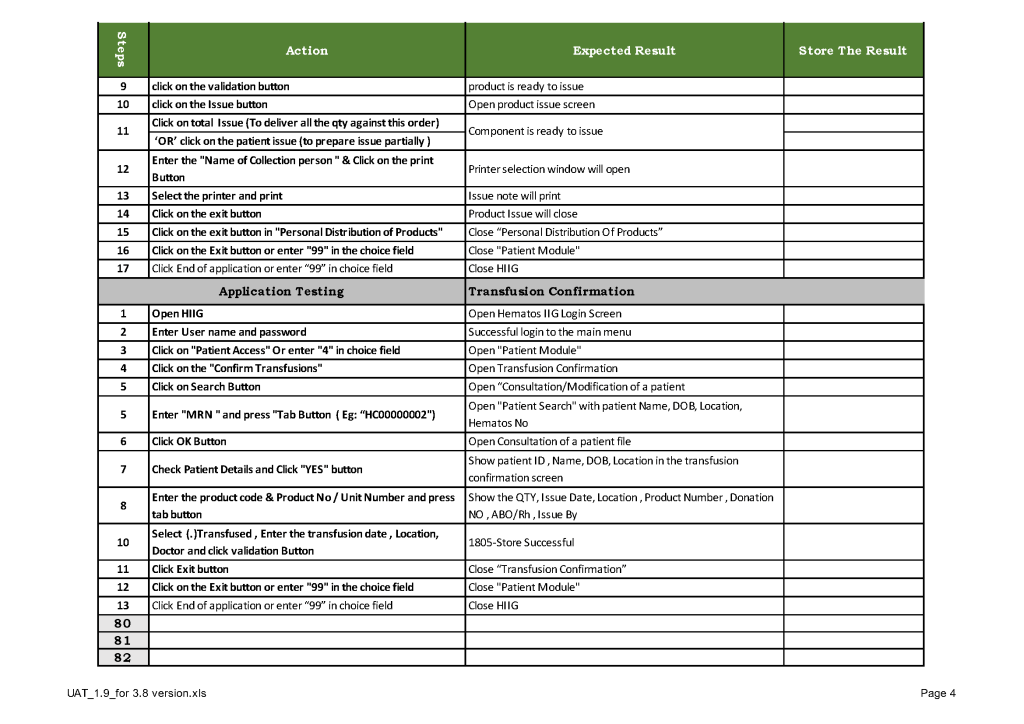

This is a sample user acceptance testing (UAT) document, prepared by Medinfo and me and submitted to HIS for the upgrade from version 3.8 to 5.0. The patient module is shown.

The Medinfo Super Users performed the script while the HIS Quality Team viewed the actual output. Note that each Action step has an Expected Result.
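Each row of such a script pairs an Action with its Expected Result and, after execution, the observed result and the reviewer’s verdict. A minimal sketch of that structure, exported with Python’s standard `csv` module; the field names and sample steps are hypothetical, not taken from the actual Medinfo document.

```python
import csv
import io
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class ScriptStep:
    step: int
    action: str
    expected: str
    observed: str = ""
    passed: Optional[bool] = None  # set by the reviewer; None = not yet run


def to_csv(steps: List[ScriptStep]) -> str:
    """Render the script as CSV, one row per Action step."""
    buf = io.StringIO()
    writer = csv.writer(buf)
    writer.writerow(["Step", "Action", "Expected Result", "Observed Result", "Verdict"])
    for s in steps:
        verdict = "NOT RUN" if s.passed is None else ("PASS" if s.passed else "FAIL")
        writer.writerow([s.step, s.action, s.expected, s.observed, verdict])
    return buf.getvalue()


# Illustrative steps only.
steps = [
    ScriptStep(1, "Open patient record", "Demographics and blood group displayed",
               "As expected", True),
    ScriptStep(2, "Request RBC allocation", "Crossmatch eligibility is checked"),
]
print(to_csv(steps))
```

The verdict is recorded by a person rather than computed by string comparison, mirroring the HIS Quality Team judging the actual output against the Expected Result.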

The evaluation of each step was recorded in this long and wide spreadsheet:

This is a continuation of yesterday’s post.

The following is a sample validation performed by the Super Users for the upgrade of the blood bank software Medinfo Hematos IIG from version 3.8 to 5.0:

It is critical to engage the technical, medical, and (blood bank) nursing staff in this process. That is why it is so important to identify a core of computer-literate users to help with the building and testing/validation.

I don’t mean finding staff who can already program or code. Rather, I mean staff who knew their work processes well and had good skills with Microsoft Office and Windows or equivalent. I did not expect them to understand database structure or use Structured Query Language (SQL). They were chosen for their ability to learn quickly and their meticulousness.

For our blood bank system, I chose computer-literate technical staff to be involved in the build from the very beginning. They learned how to test each module and, to some degree, support it. These became my Super Users and to this day support the system for many tasks. They served as the system administrators and worked directly with me as the Division Head for Laboratory Information Systems. They were not full-time and still had their other clinical/technical duties. They liaised with the software vendor’s engineers.

Our blood bank system was NOT a turnkey system. It was custom-designed according to our workflows. There were NO default settings!! We had to remember, ‘Be careful what you ask for, you might get it!’ In some countries, approved systems are turnkey and may allow only a few changes to the core structure; often only cosmetic changes are permitted, so the system may not be optimized for the needed workflow.

When we built our first dedicated blood bank computer system, the company would take one module at a time and completely map out the current processes collaboratively with me. After this, I analyzed the critical control points and started to map out the improved computer processes that would take over. Then we would build those processes in the software and test them. If a test failed, we would correct the build and test again, and again if necessary. Fortunately, the blood bank vendor did not charge us when we made mistakes.

Sadly, another (non-blood bank) vendor gave only limited opportunities to make settings. If the settings were wrong, there might be additional charges for corrections. This vendor really pushed the client to accept the default settings regardless of whether they actually fit. End users were selected to make and approve the settings, but they were only minimally trained in how to make them. It was a journey of the end users being led to the slaughter: they usually accepted the vendor’s recommended defaults and were then blamed for those settings. There wasn’t enough time for trial, error, and correction.

The blood bank system Super Users were an important part of our process. They were an integral part of the implementation team and could propose workflows, changes, etc., subject to my approval. They learned the system from the start and developed invaluable skills that allowed them to support the system after the build. They could also validate the system according to the protocols I prepared. Moreover, I took responsibility for their activities, so they were not left hanging.

Every hospital blood bank location and the blood donor center had Super-Users. These included:

The cost of using these staff? They were paid overtime and were relieved of other duties when working on Super User duties. This was much cheaper than hiring outside consultants who may or may not know our system well enough to perform these tasks.

By having a Super User at each site, I in effect had an immediate local contact person for troubleshooting problems who could work with the technical/nursing staff. We did not rely on the corporate IT department for support and worked directly with the software vendor. Response time was excellent this way.

Processes and Software Building—Part Three

Using the current state to build a new workflow can be a difficult task and a balancing act. If one changes it too much, it may be difficult for the staff to cope; if too little, then why bother at all? Still, we had to take the time to analyze our current system and identify areas for improvement. When building a new computer system, we didn’t want to set our current system, flaws and all, in concrete. A new system is costly and would be very hard to change again, so this had to be done right.

Also, whether or not you have pre-existing software may affect your choices. In my opinion, it is easier to learn something new than to change a system that everyone has already learned: learning is easier than unlearning and relearning.

First, I studied the new system’s capabilities and noted the features I would like to adopt to improve the current processes, especially at the critical control points. I also studied our incident reports: where had there been nonconformances? How could I change things for the better, i.e. with increased safety and compliance with international standards?

I did not want to throw out a successful manual system, only to optimize it. I tried to pick out the manual processes that worked and build them into the new workflows. What I wanted was a system recognizable and familiar to the staff, but enhanced, with the least amount of change needed to reach our goals.

Although the vendor did some initial testing, this was insufficient to accept the system. I didn’t want the vendor to just show me scenarios that they had concocted. I always wondered why the vendor chose those examples and not others. Could it be that the other processes did not work as desired? I always insisted on giving the vendor representative scenarios and having them show me how the system reacted.

It is a daunting task to know what settings to make. At one of my previous institutions, the administration recognized that they needed additional expertise from someone experienced in the new system and hired an outside firm in addition to the software vendor. Still, even this was not sufficient to make the proper settings and testing. We had to rely on ourselves!

Ultimately, the Laboratory had to test the system thoroughly. The only way to do this was to use our own resources: only we could test its actual functionality to the degree needed to ensure safety. Still, where could we get the resources to do this? Outside consultants were very expensive, especially if they had to live on-site for extended periods. The only answer was to make use of our internal resources, i.e. our staff.

In the old days, when only polyclonal reagents were available, QC of reagents took significant time. It has become simpler since the introduction of monoclonal cocktail reagents.

Controls may be explicit (a specific control provided by the manufacturer) or implicit (implied by at least one negative reaction in the well of a gel card).
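Both kinds of control can be enforced in software. The sketch below is a simplified, hypothetical ABO forward-typing interpretation: reactions are assumed to be graded 0 to 4 (for 0 to 4+), a grade of 3 or more is assumed to count as a clear positive, and the reverse typing that a real truth table would also check is omitted.

```python
from typing import Optional

# Forward-typing truth table: (anti-A positive, anti-B positive) -> group.
ABO_FORWARD = {
    (False, False): "O",
    (True, False): "A",
    (False, True): "B",
    (True, True): "AB",
}


def control_valid(anti_a: int, anti_b: int, control: Optional[int]) -> bool:
    if control is not None:
        # Explicit control: the manufacturer's control well must be negative.
        return control == 0
    # Implicit control: at least one negative reaction on the card.
    return anti_a == 0 or anti_b == 0


def interpret_forward(anti_a: int, anti_b: int,
                      control: Optional[int] = None,
                      strong: int = 3) -> str:
    """Return an ABO group, or a reason the result needs manual review."""
    if not control_valid(anti_a, anti_b, control):
        return "REVIEW: control not valid"
    if any(0 < grade < strong for grade in (anti_a, anti_b)):
        return "REVIEW: weak reaction"
    return ABO_FORWARD[(anti_a >= strong, anti_b >= strong)]


print(interpret_forward(4, 0))             # A
print(interpret_forward(4, 4))             # REVIEW: control not valid
print(interpret_forward(4, 4, control=0))  # AB
```

The AB case shows why the control matters: when every well is positive there is no built-in negative, so without an explicit control the sketch refuses to interpret the result.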

In order to properly interpret test results:

Make certain that test results meet the criteria for interpretation. For example, do not accept negative results for IAT typing if the DAT is strongly positive (blocking antibody).

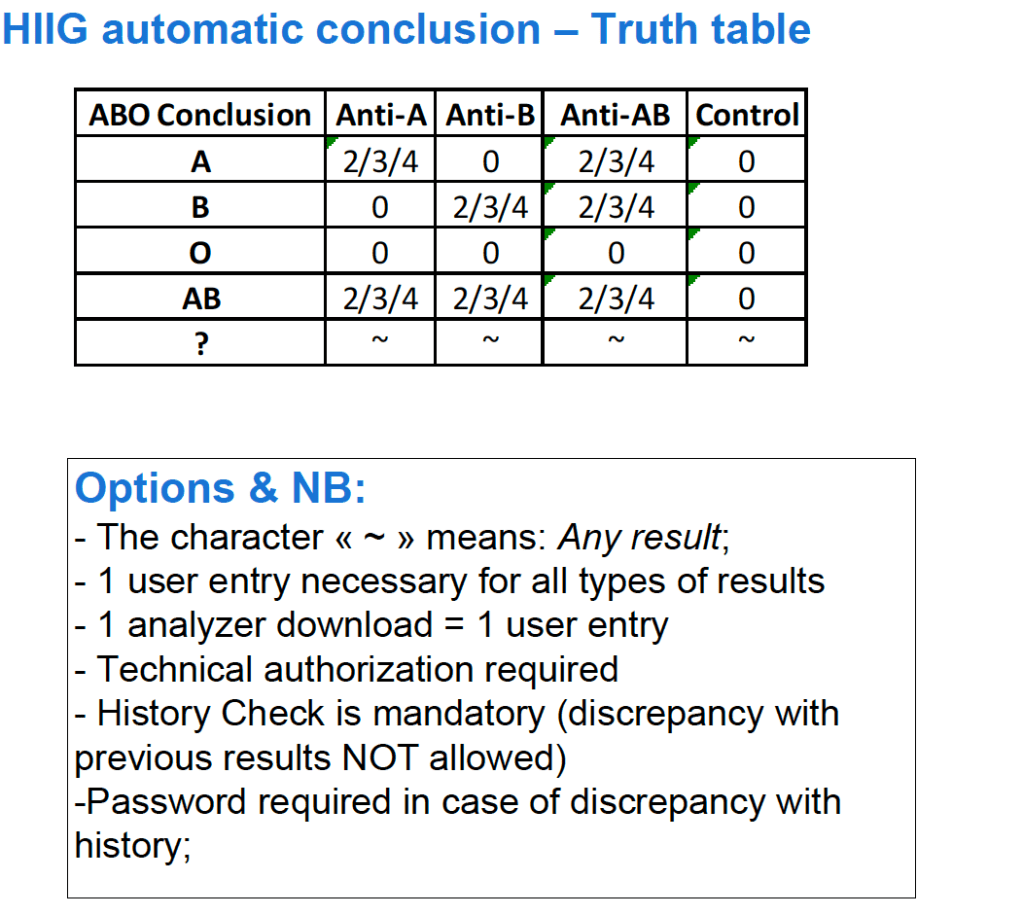

For both manual and automated tests, you can build the control criteria directly into your blood bank computer system’s truth table of results. This way the system enforces the criteria and prevents false interpretations:

Example of control for ABO typing: