The following post is based on my experience at HMC from 2011 through 16/4/20:

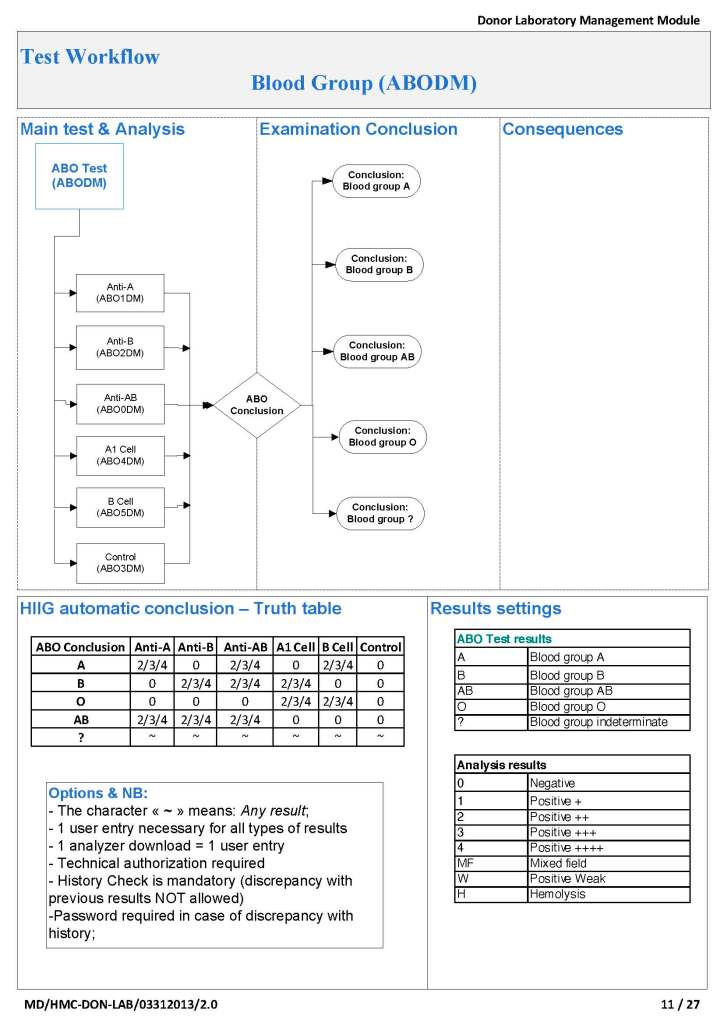

I have written many posts about the Blood Donor Center, covering registration, collection, processing, testing, and dispatch (inter-depot transfer). The hospital transfusion service (hospital blood bank) continues the process by selecting the appropriate blood component for each patient. Specifically, it:

- Verifies the ABO/D type of RBCs and ABO type of plasma components received

- Physically examines each unit, checking for leaks, labelling errors, etc.

- Receives the various components into stock

- Performs basic type and screen (group and save) testing of the patient including ABO/D type and antibody screen

- Identifies antibodies if the antibody screen is non-negative

- Performs the direct antiglobulin test (DAT) and, if it is positive, an elution

- Modifies components (thawing, aliquoting, irradiating, washing, pooling), although at some sites these functions may be performed in the Blood Donor Center

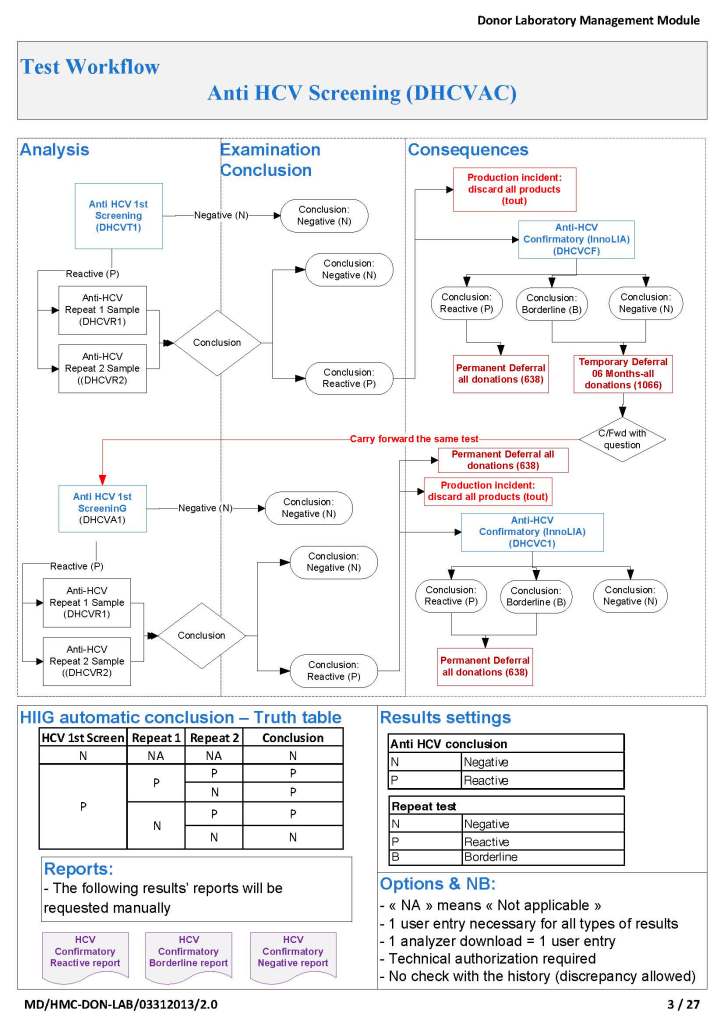

- Performs compatibility testing, selecting the appropriate method (electronic, immediate-spin, or antiglobulin-phase crossmatch)

- Releases blood components to outside staff (nurses, doctors, etc., as allowed by the local authority)

- Investigates transfusion reactions
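Choosing among electronic, immediate-spin, and antiglobulin-phase crossmatch comes down to a small decision rule. The sketch below is a simplified illustration under stated assumptions, not the HMC system's logic: it assumes electronic crossmatch requires a validated computer system, at least two concordant ABO/D determinations, and no current or historical clinically significant antibody; an antibody-negative patient who fails those criteria gets an immediate-spin crossmatch, and anyone else gets an antiglobulin (AHG) crossmatch. The names `PatientStatus` and `select_crossmatch_method` are hypothetical, and treating every positive screen as needing AHG is a simplification that real policies refine.

```python
from dataclasses import dataclass
from enum import Enum


class CrossmatchMethod(Enum):
    ELECTRONIC = "electronic"
    IMMEDIATE_SPIN = "immediate-spin"
    ANTIGLOBULIN = "antiglobulin (AHG) phase"


@dataclass
class PatientStatus:
    # Hypothetical fields summarising what the blood bank system knows.
    concordant_abo_determinations: int      # includes the current sample
    current_screen_negative: bool           # antibody screen on current sample
    historical_significant_antibody: bool   # any clinically significant antibody on record
    site_validated_for_electronic: bool     # computer system validated for electronic XM


def select_crossmatch_method(p: PatientStatus) -> CrossmatchMethod:
    """Pick the least labour-intensive crossmatch the patient's results allow."""
    antibody_free = p.current_screen_negative and not p.historical_significant_antibody
    if not antibody_free:
        # Current or historical antibody: full antiglobulin-phase crossmatch.
        return CrossmatchMethod.ANTIGLOBULIN
    if p.site_validated_for_electronic and p.concordant_abo_determinations >= 2:
        return CrossmatchMethod.ELECTRONIC
    # Antibody-free, but electronic criteria not met: immediate spin still
    # detects ABO incompatibility between patient plasma and donor cells.
    return CrossmatchMethod.IMMEDIATE_SPIN
```

For example, a patient with a single ABO/D type on file and a negative screen would be routed to immediate spin until a second concordant type is obtained.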

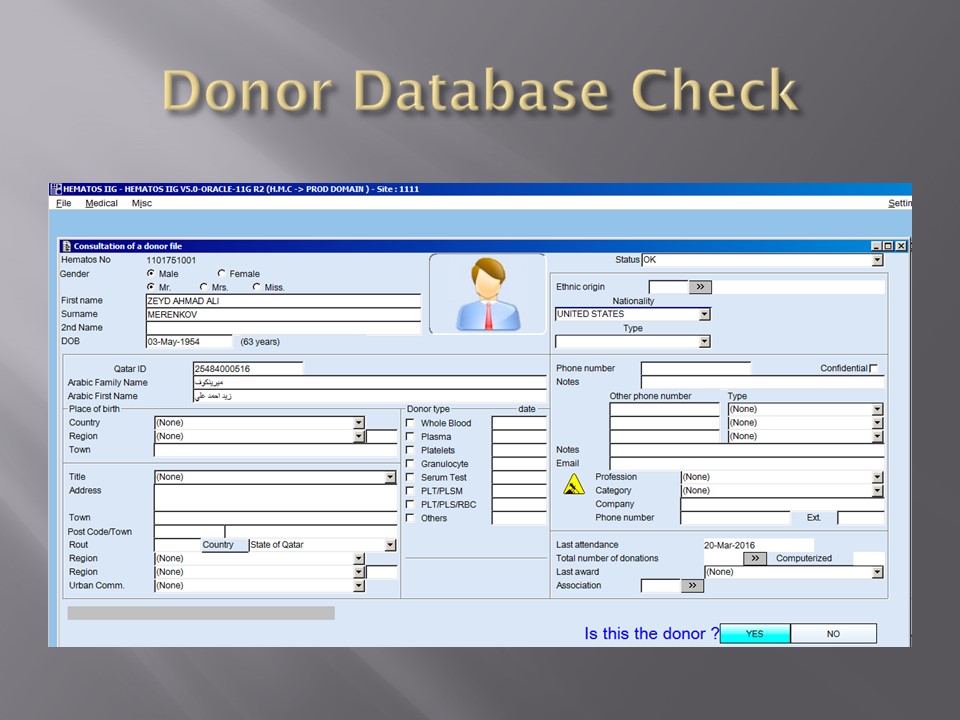

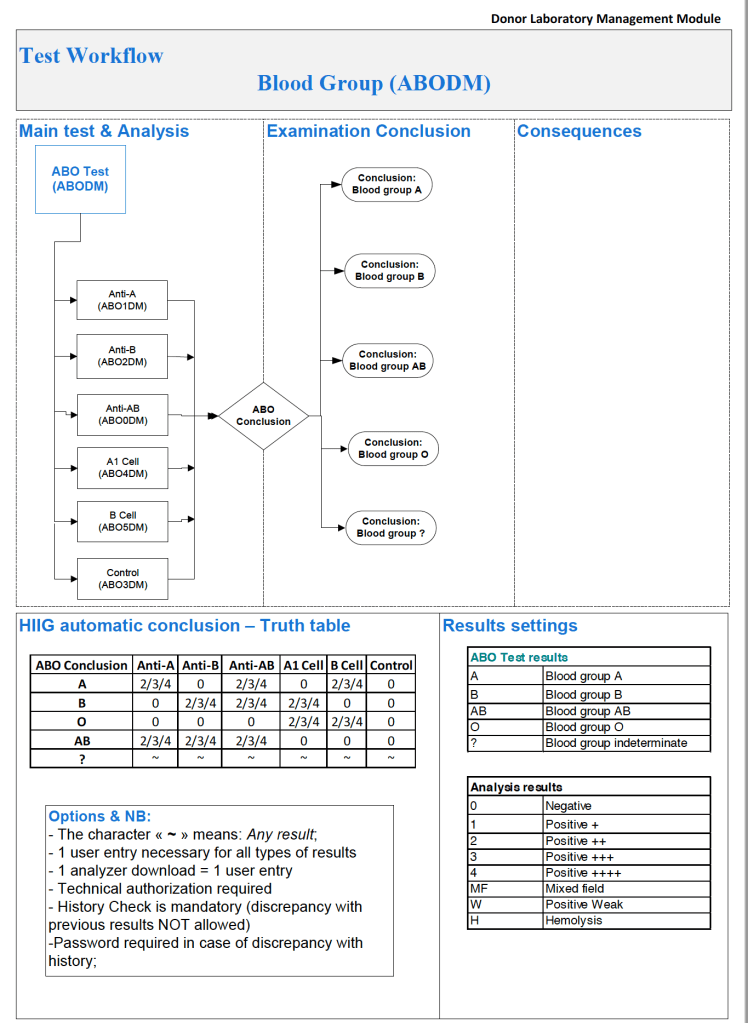

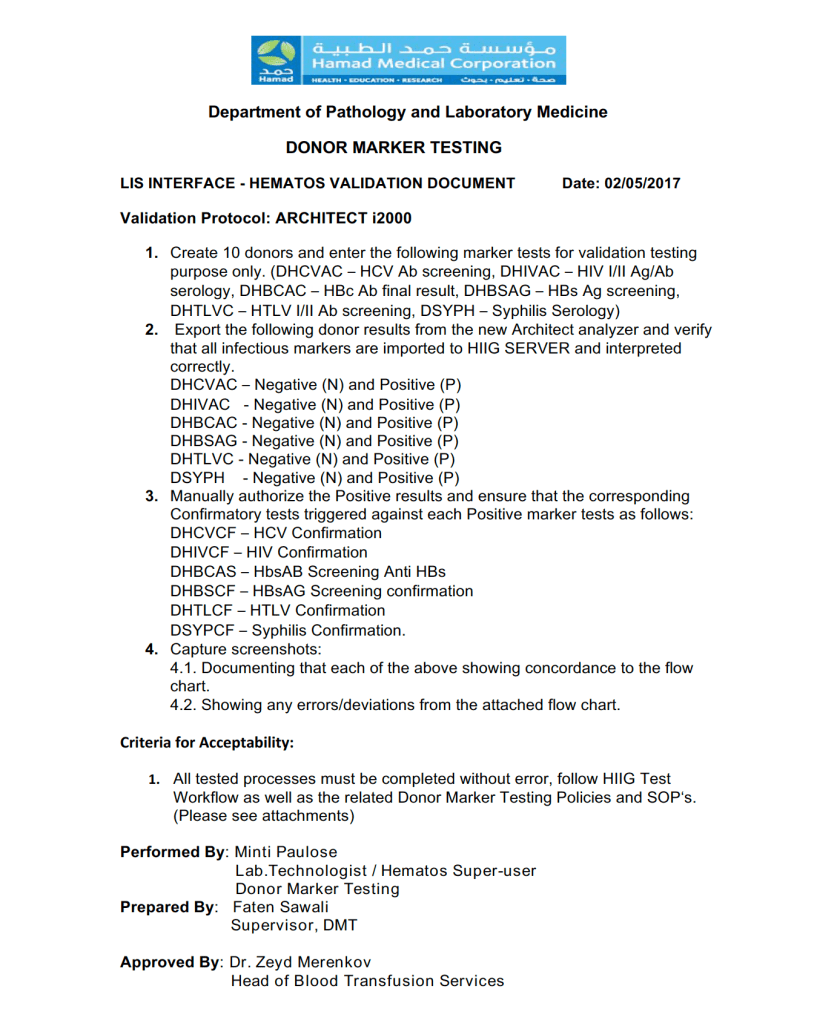

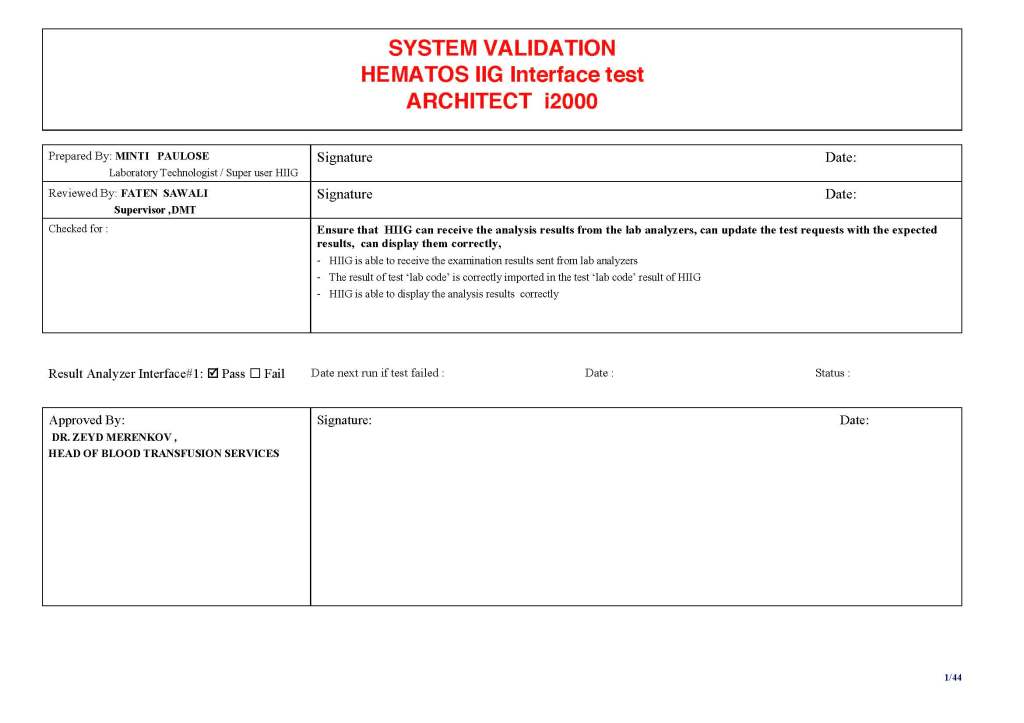

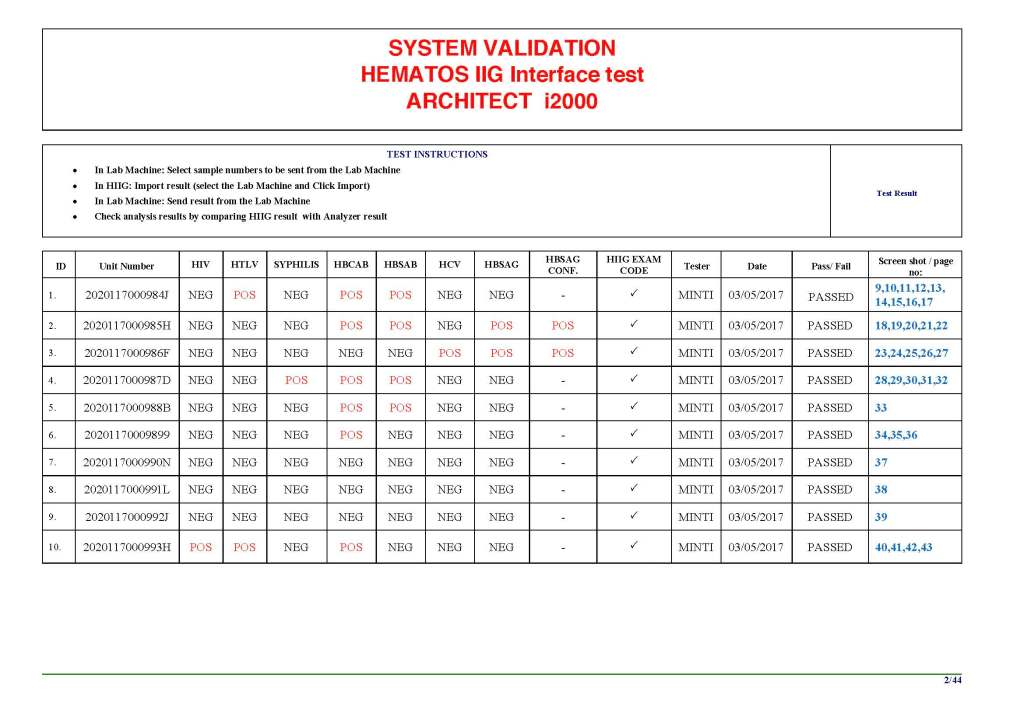

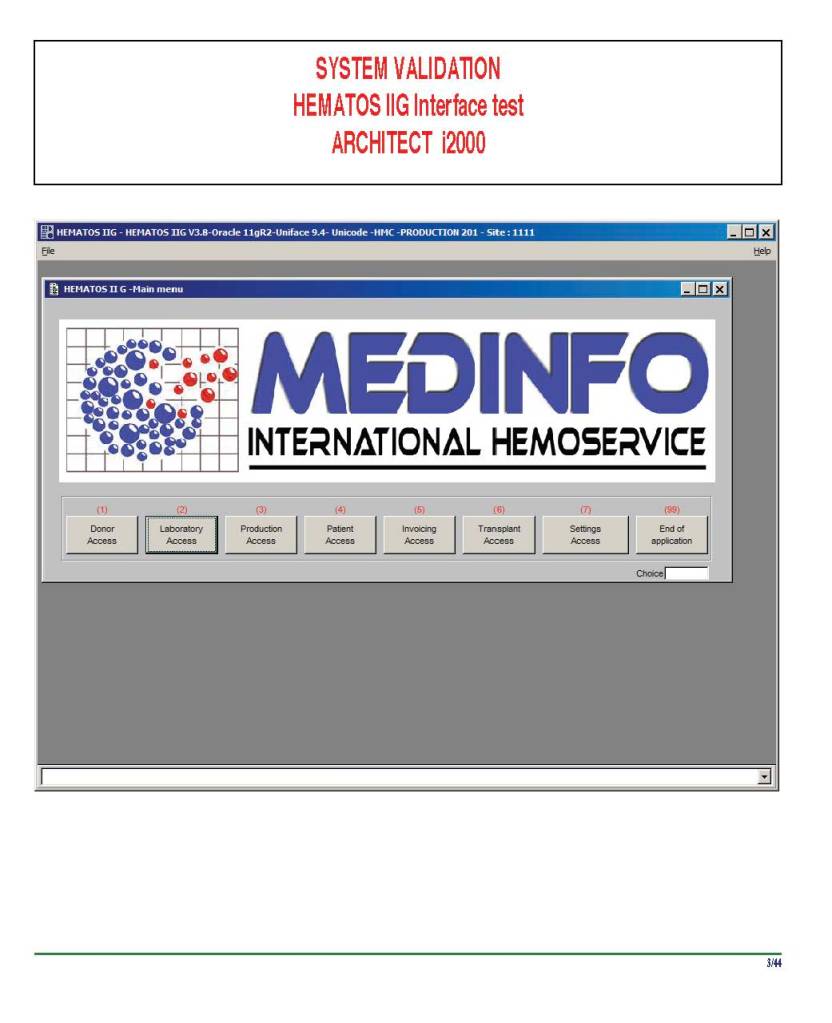

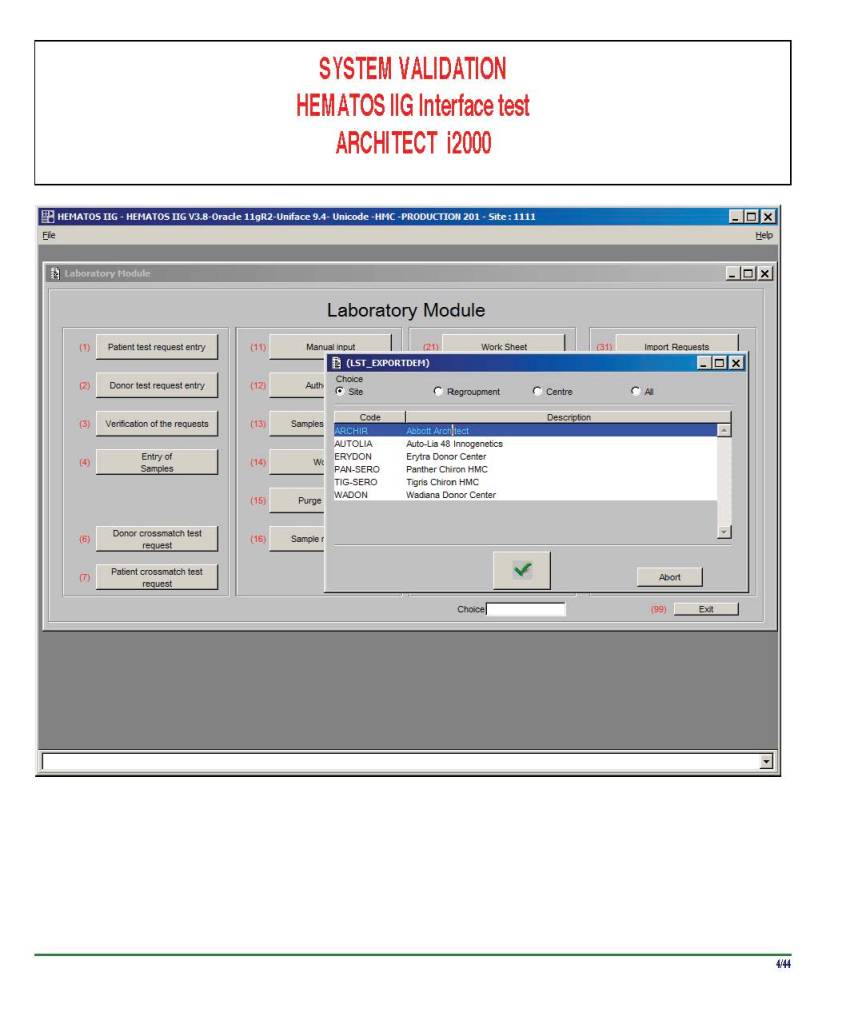

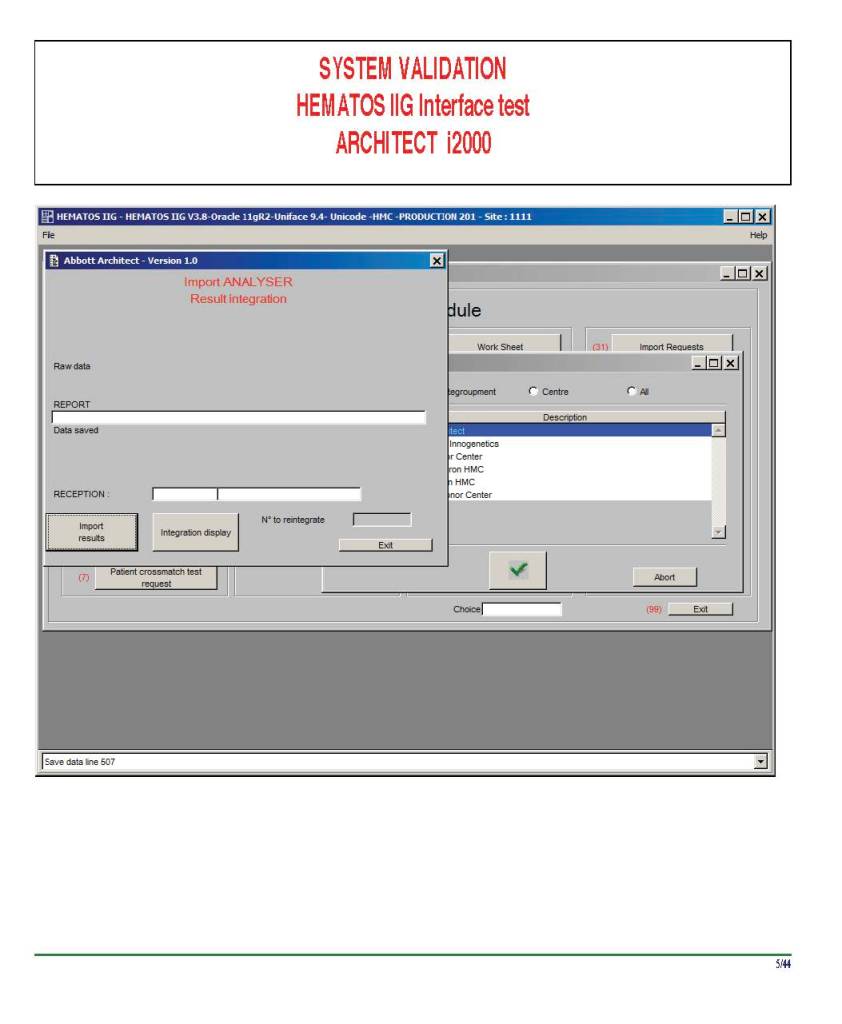

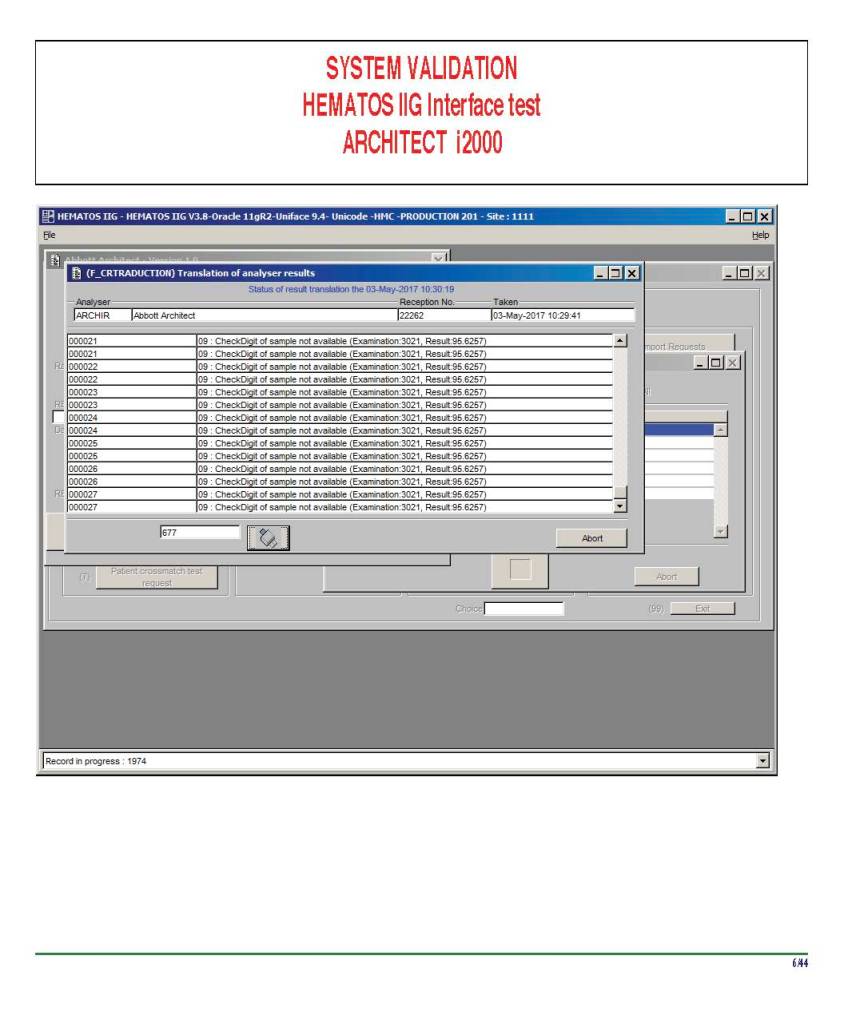

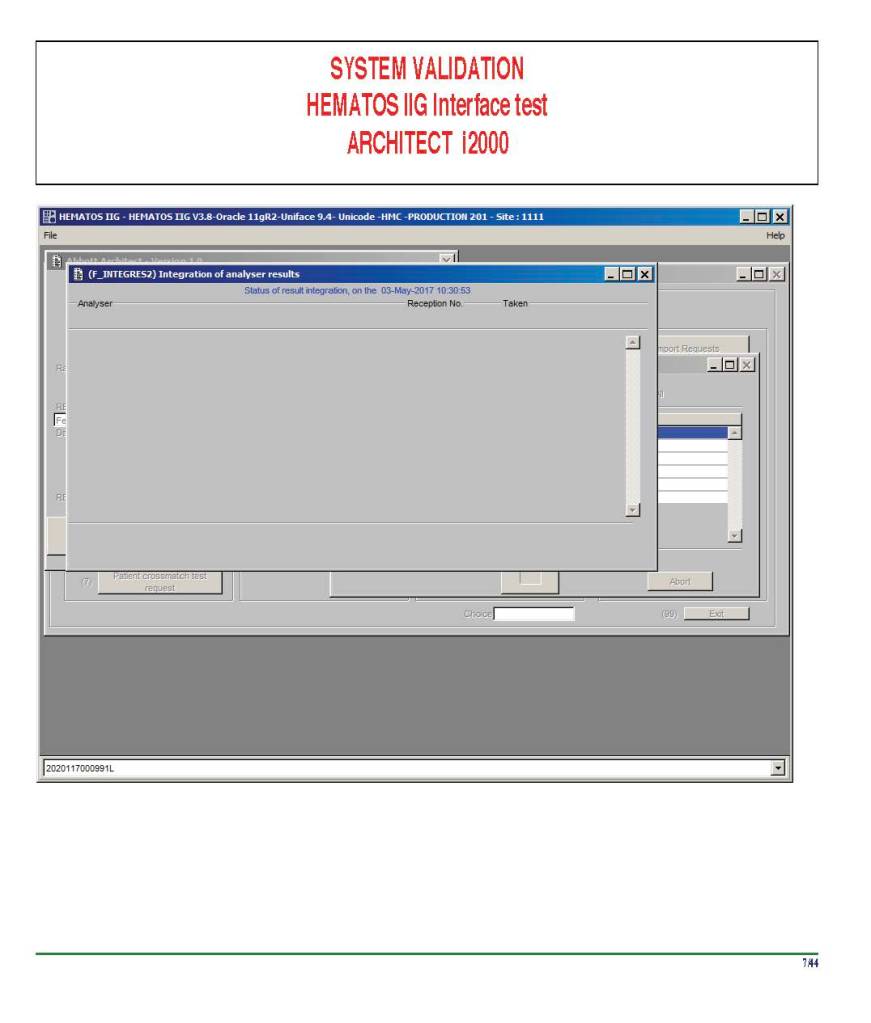

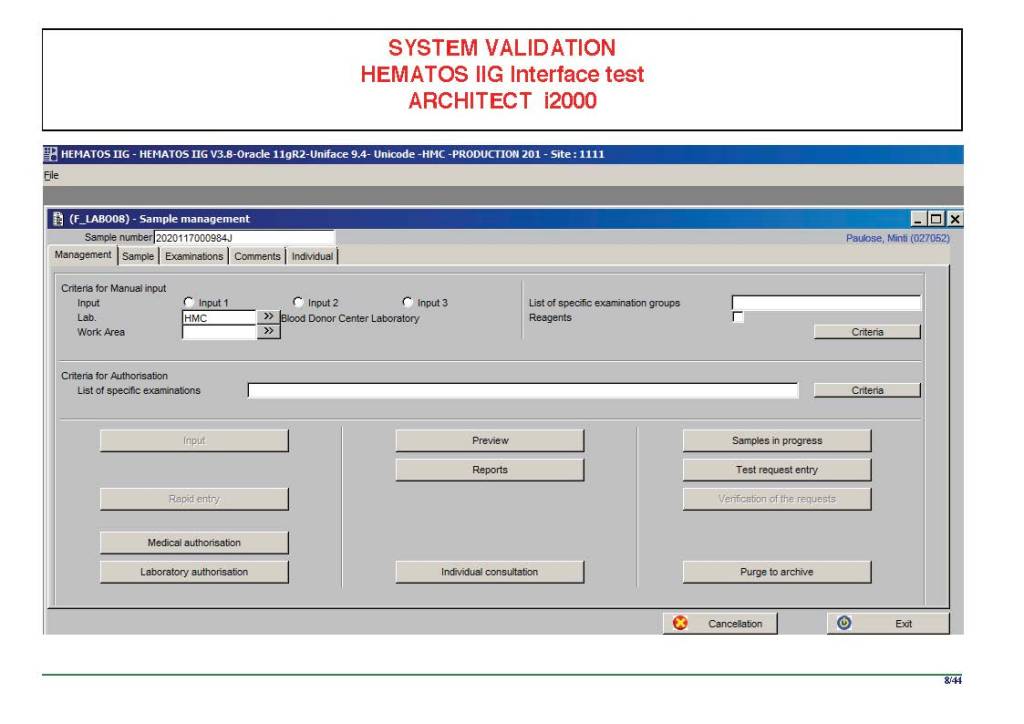

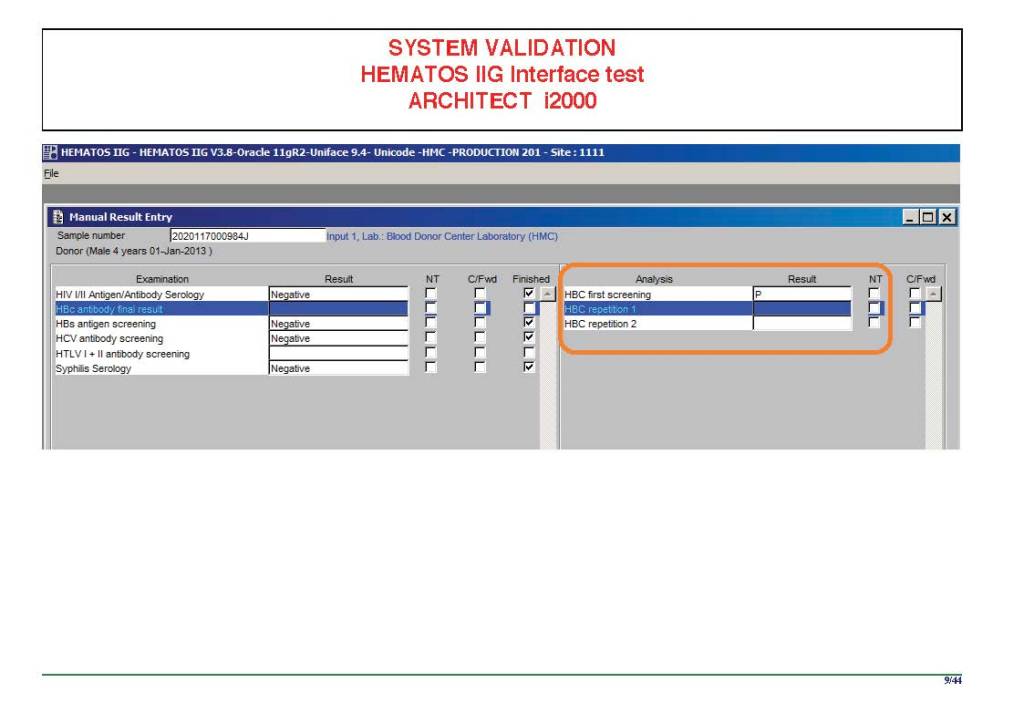

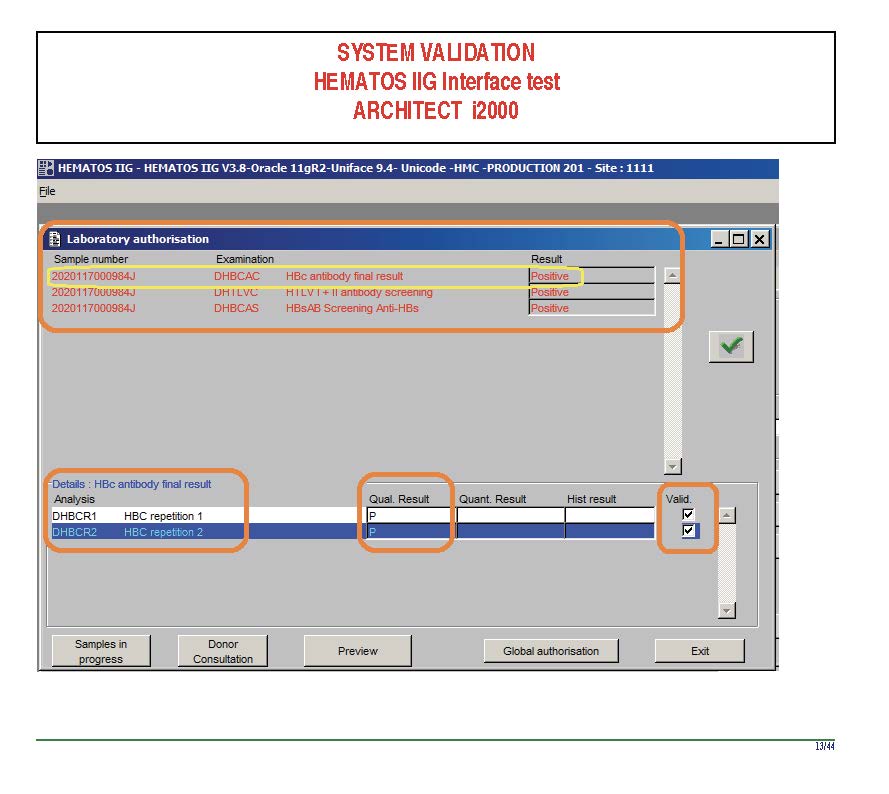

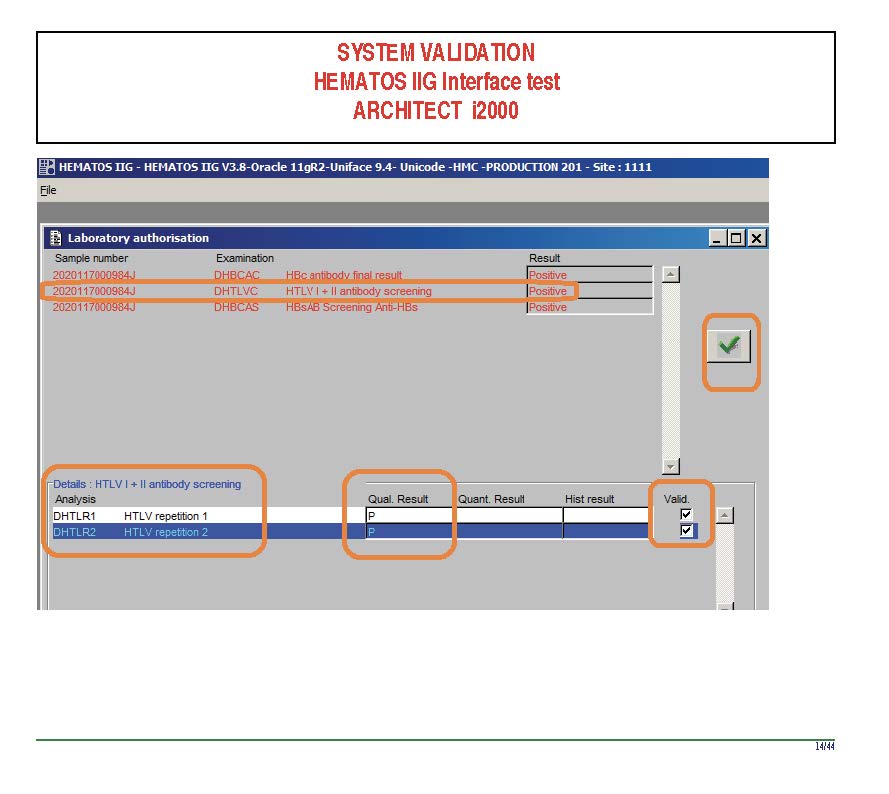

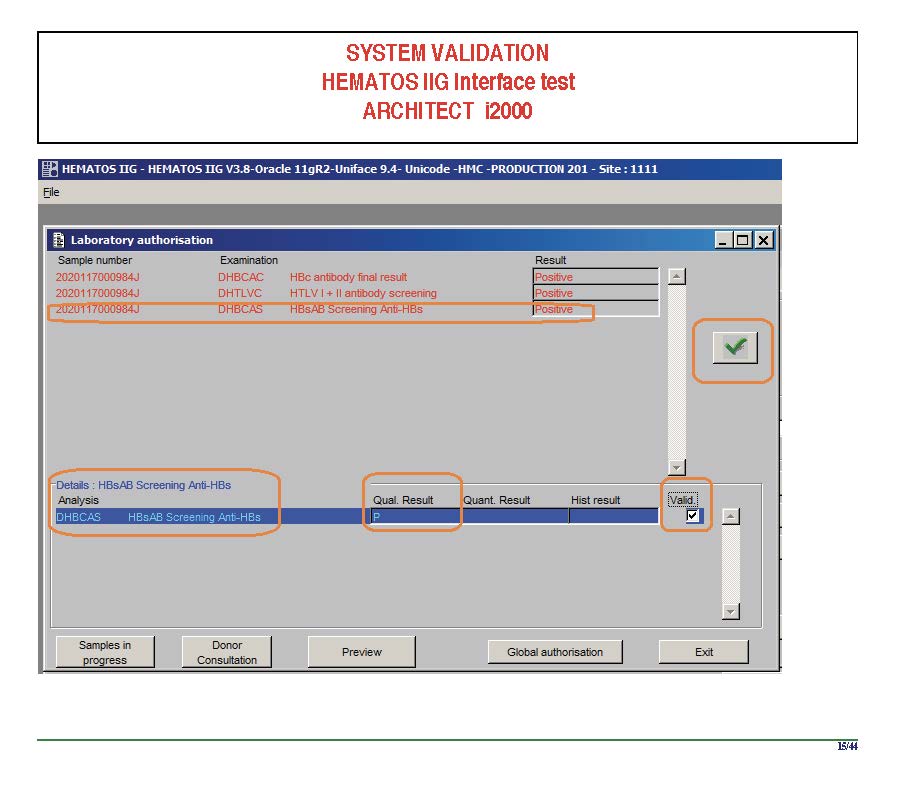

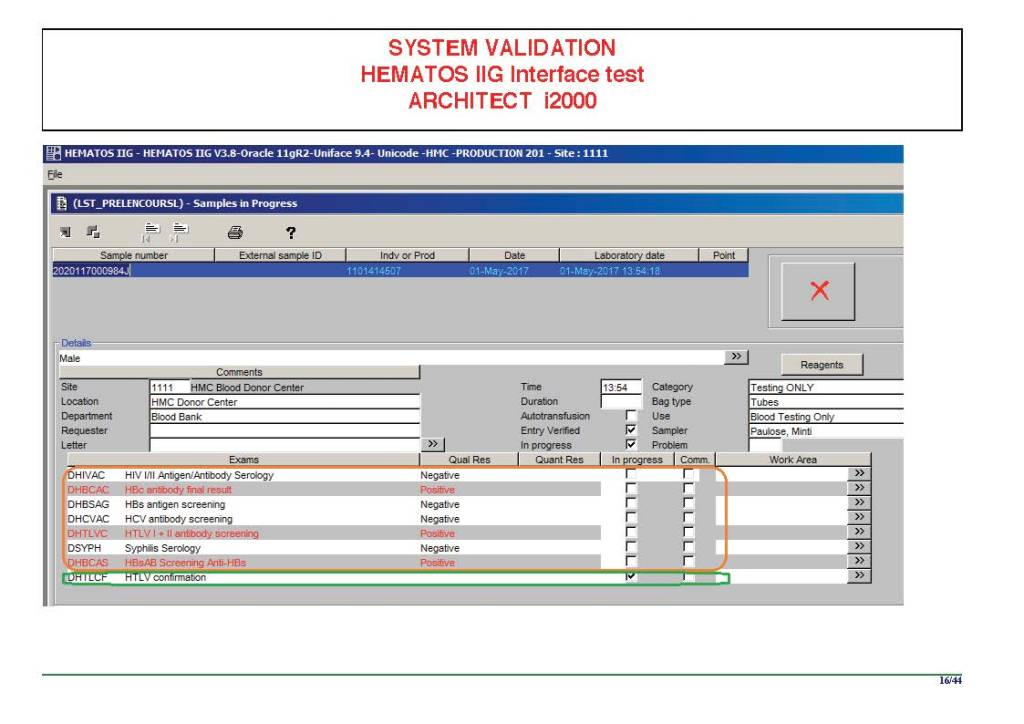

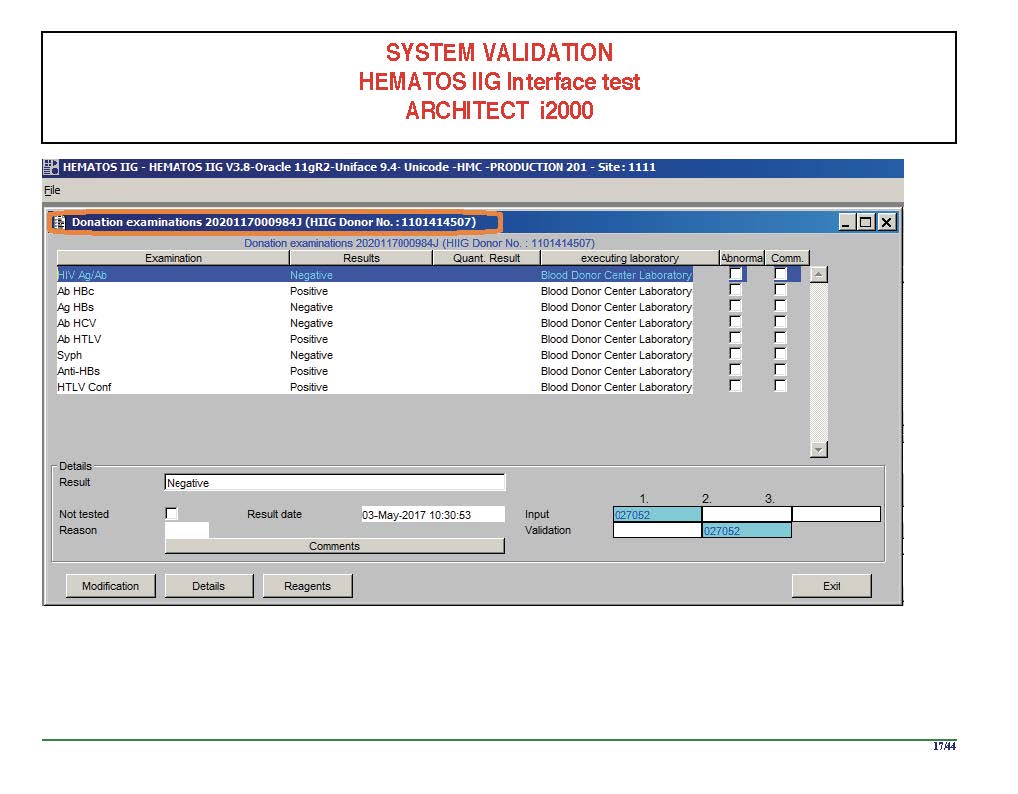

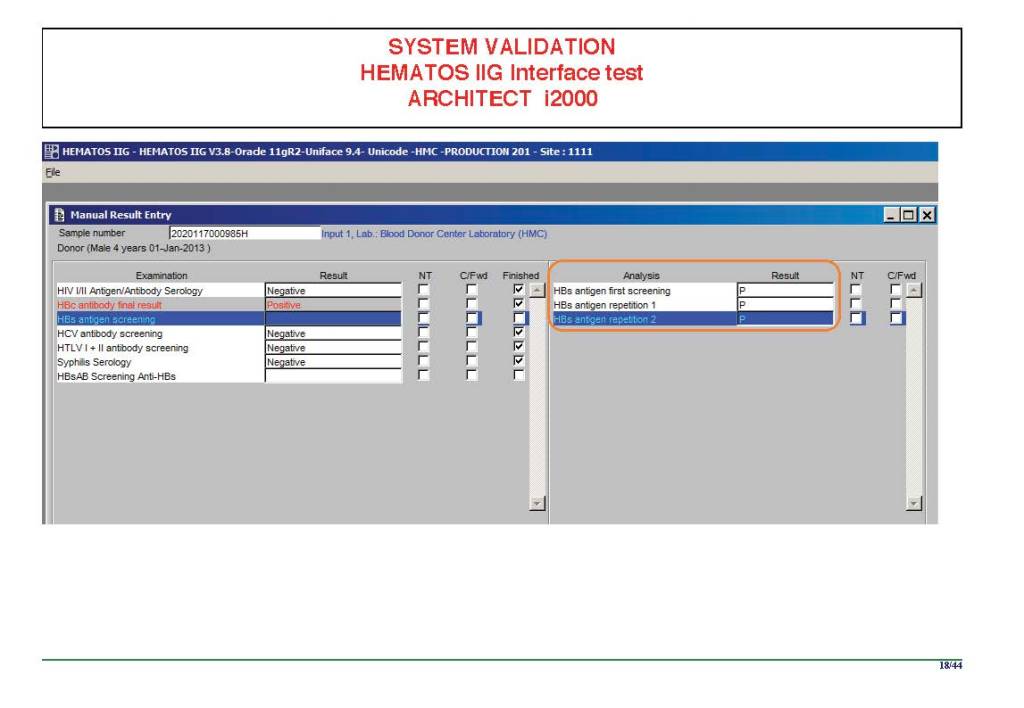

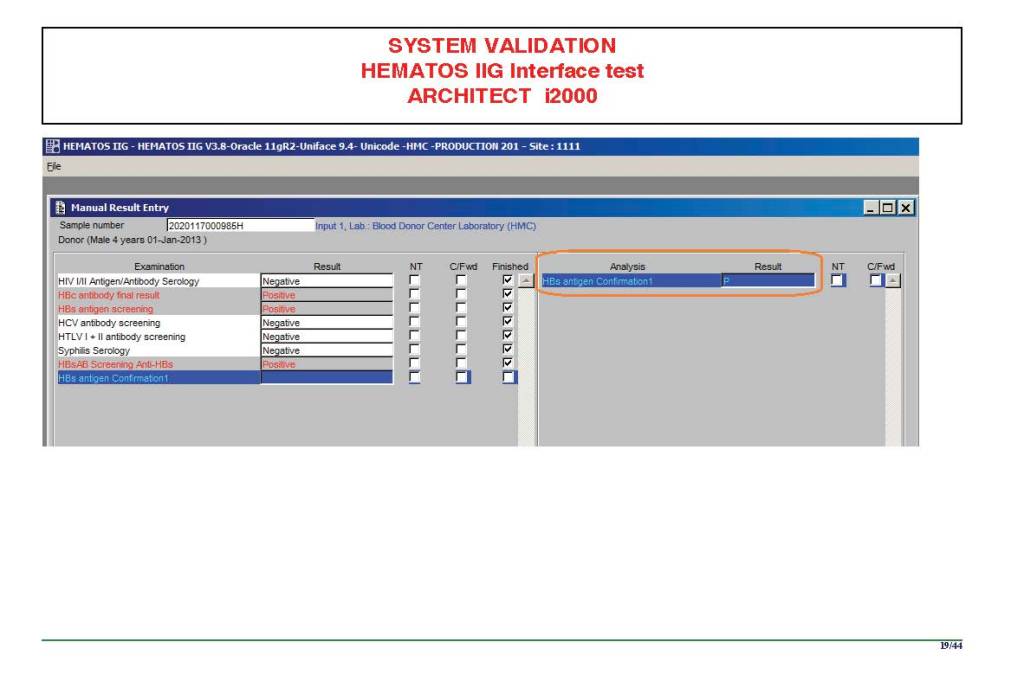

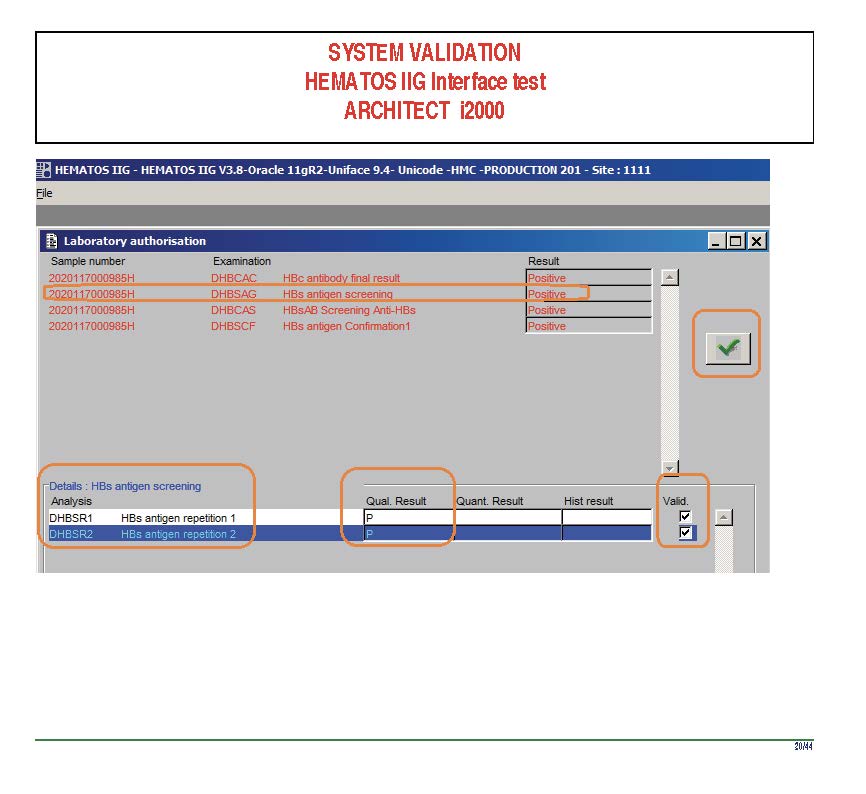

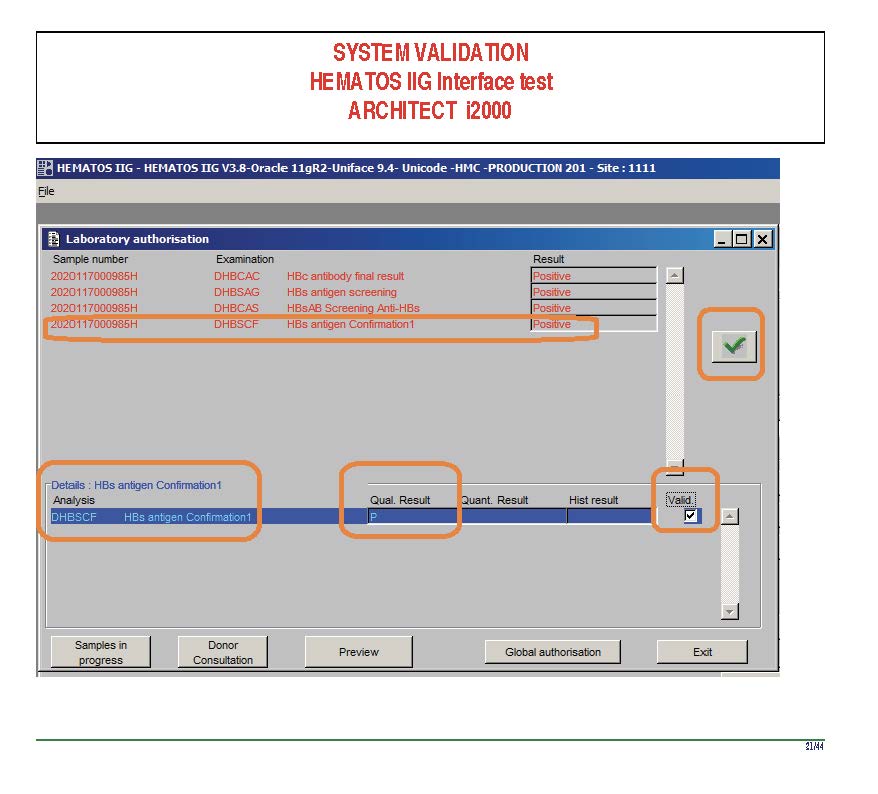

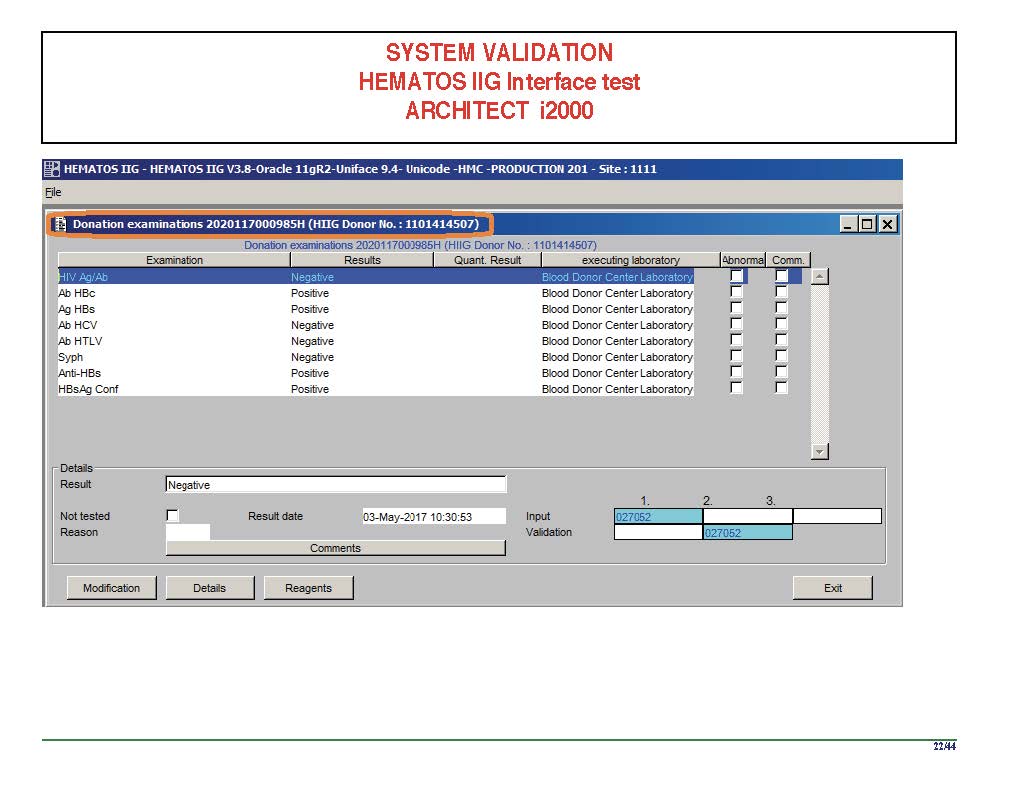

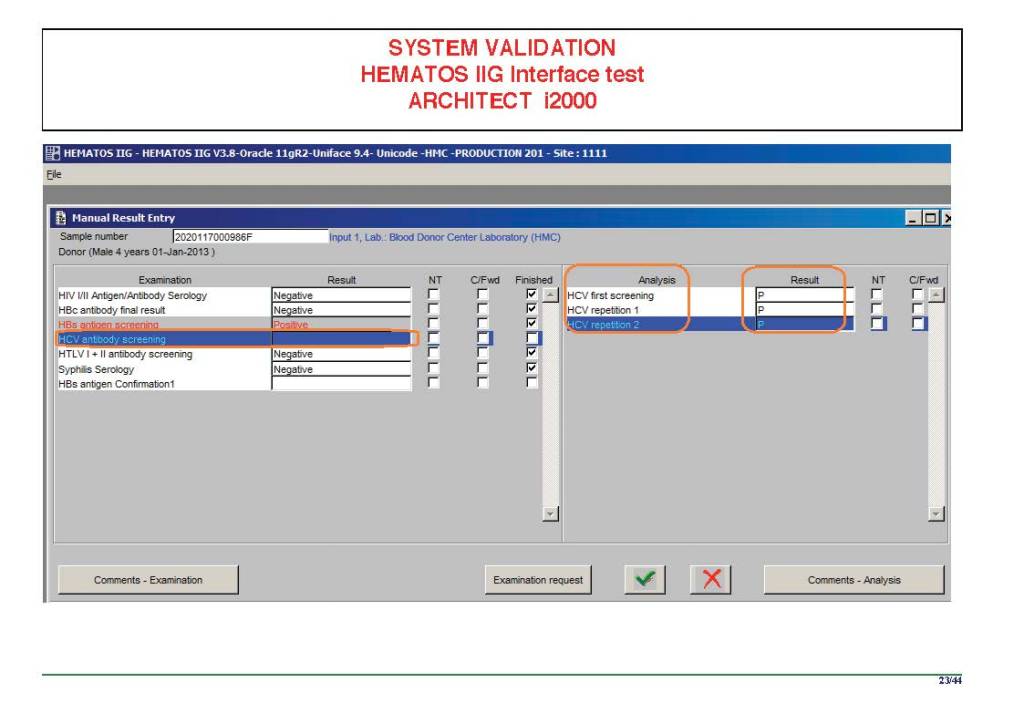

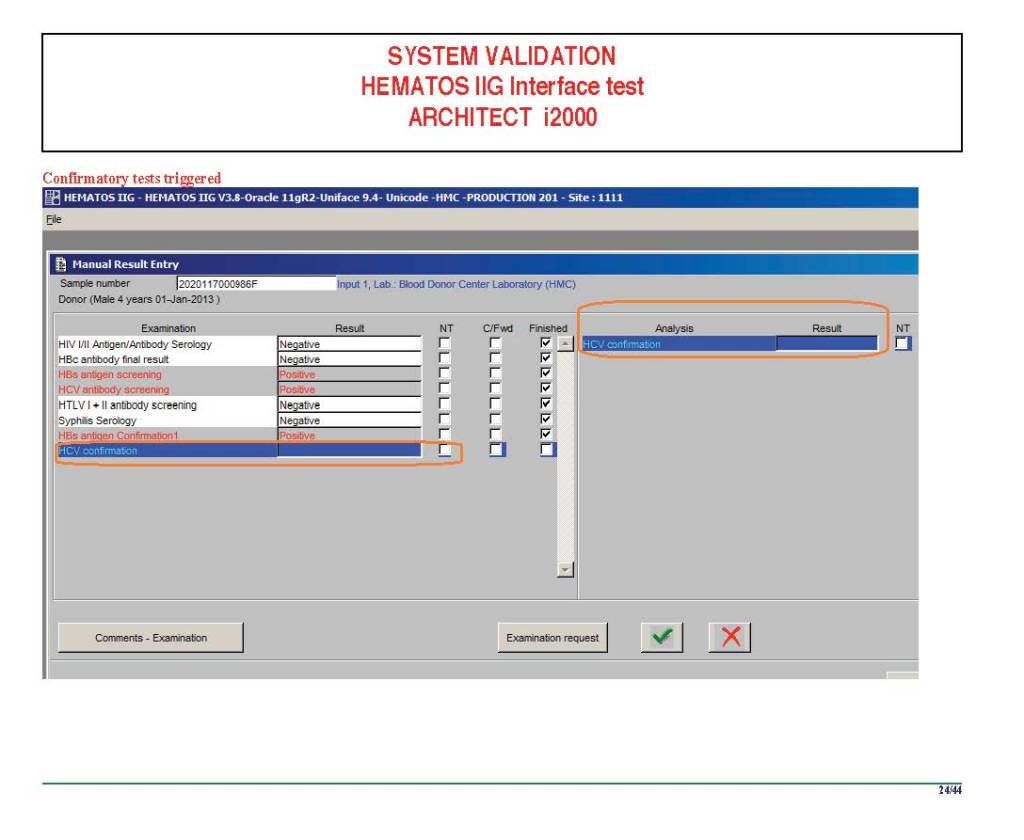

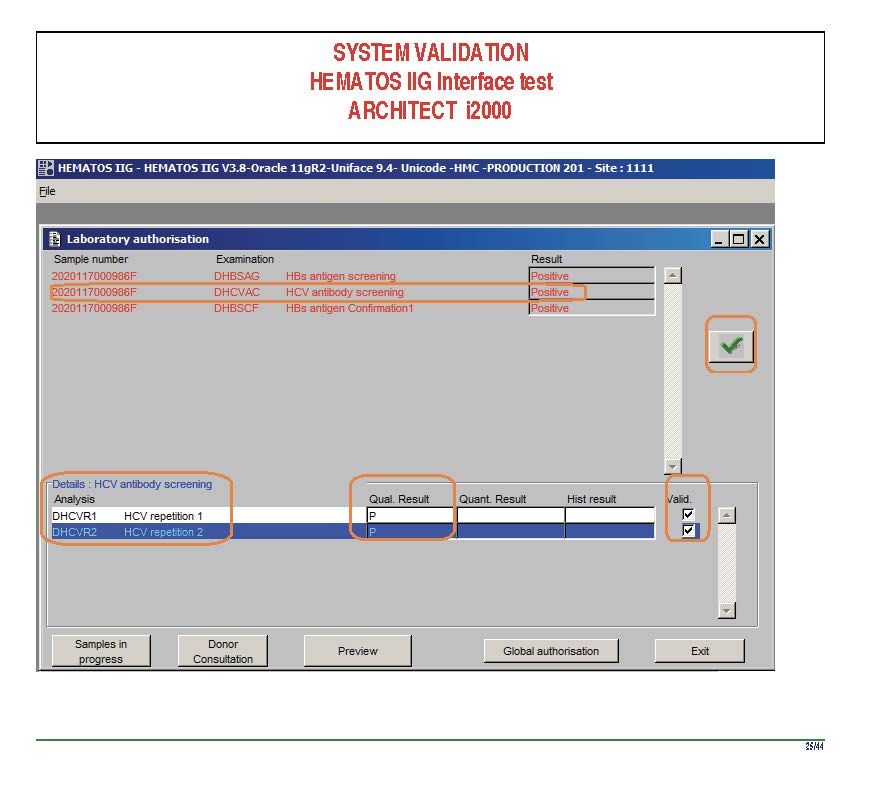

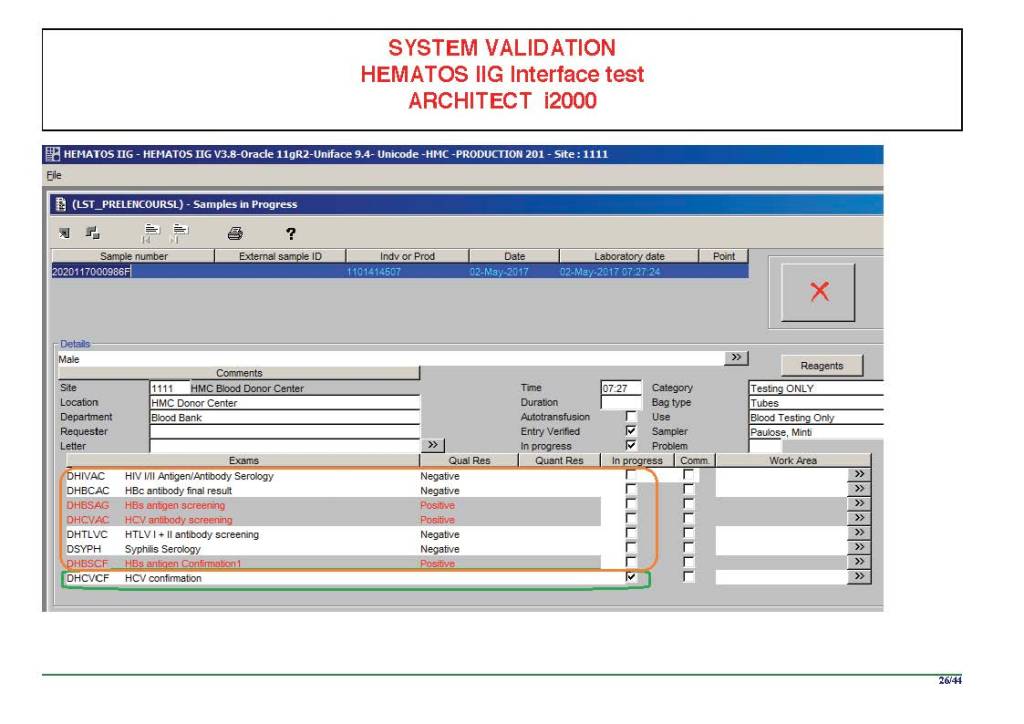

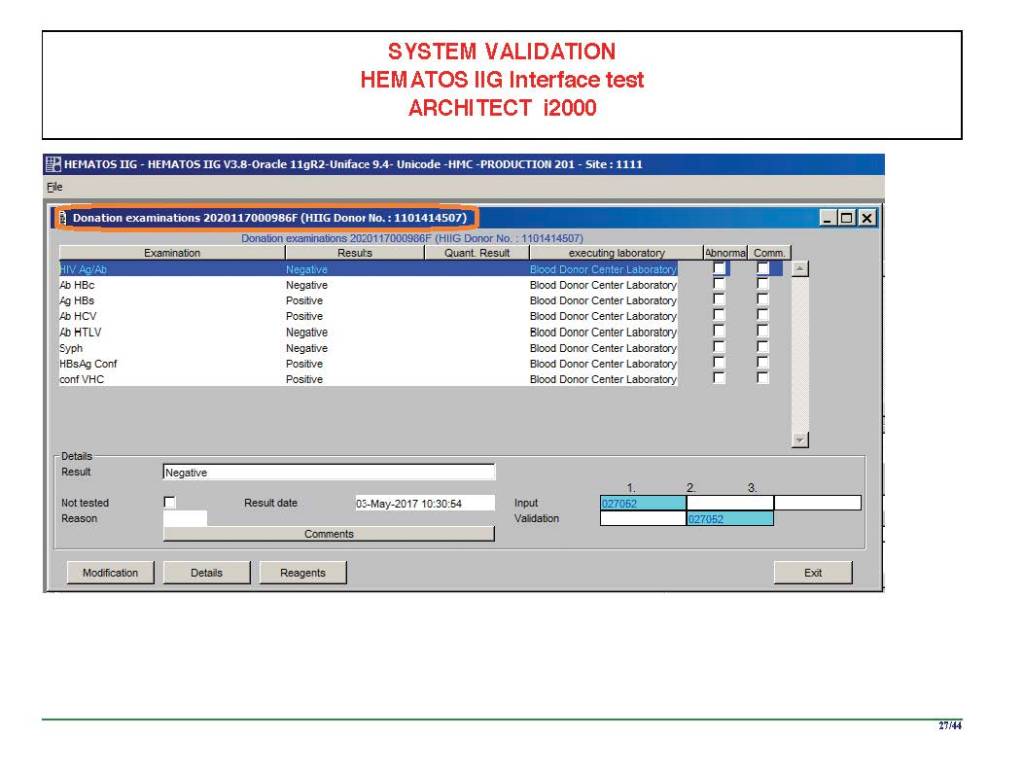

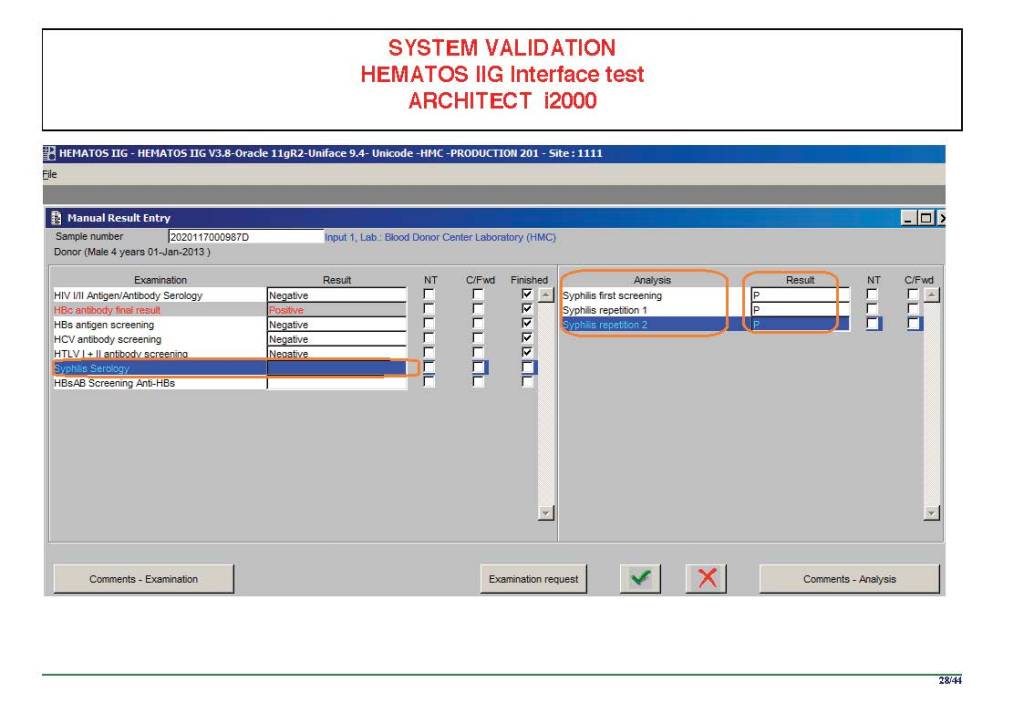

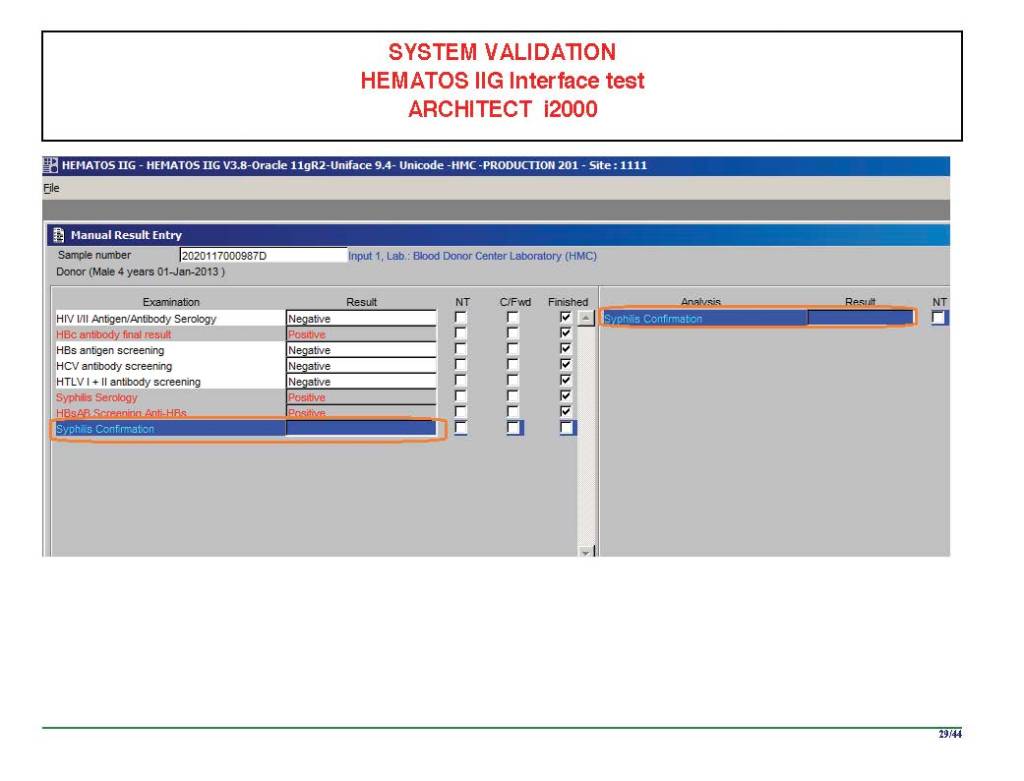

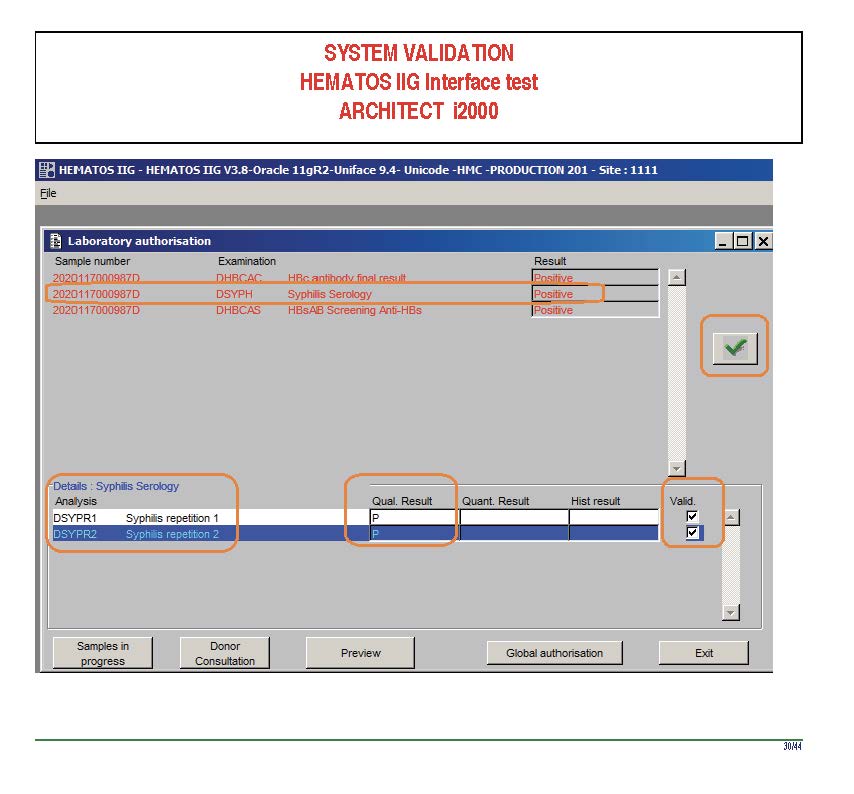

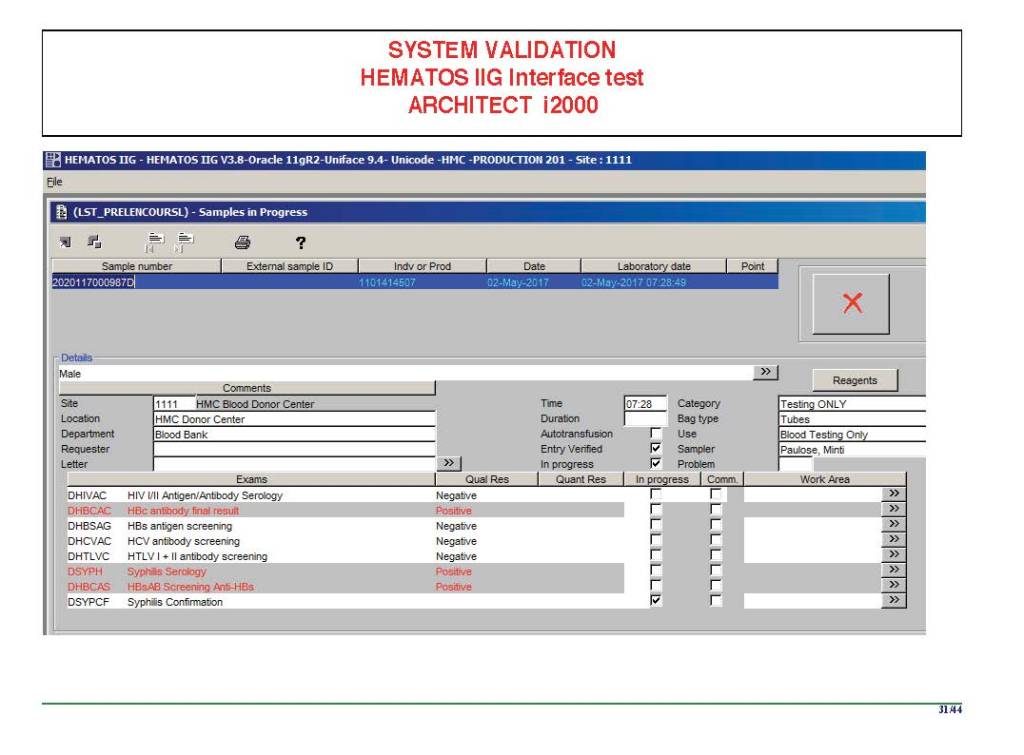

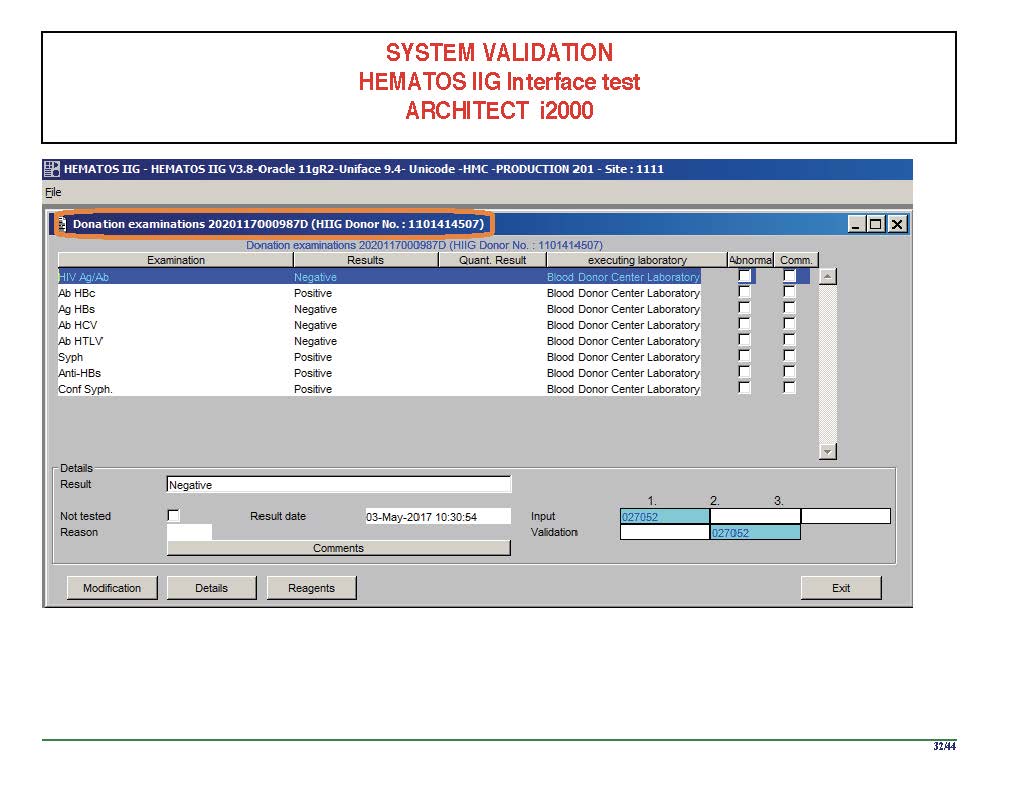

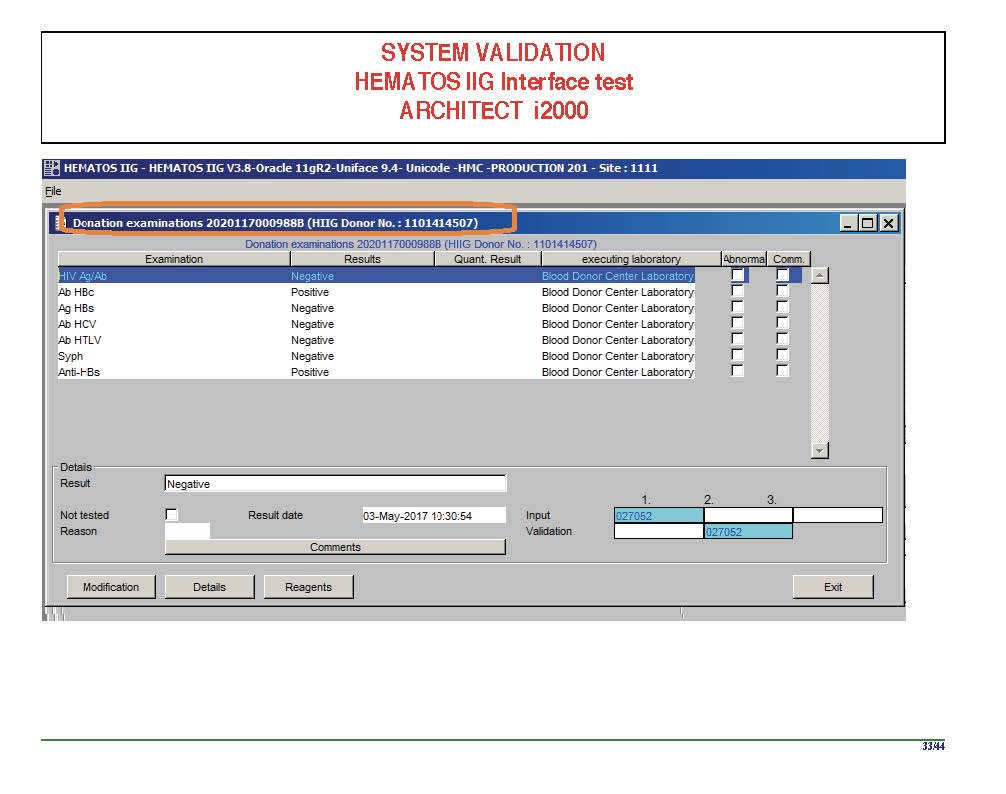

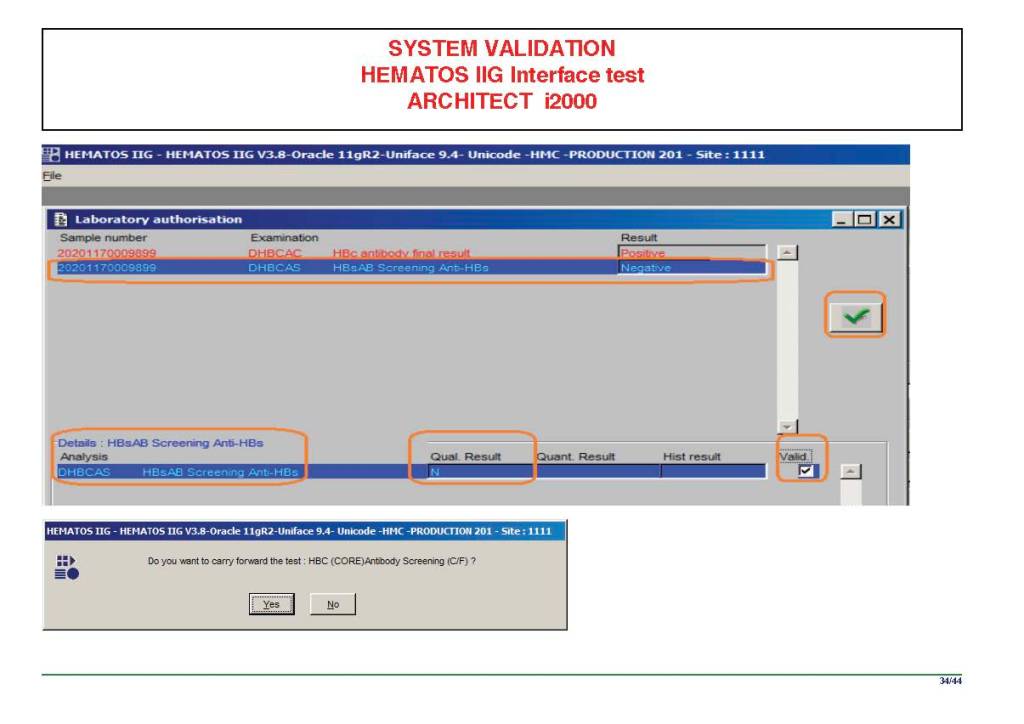

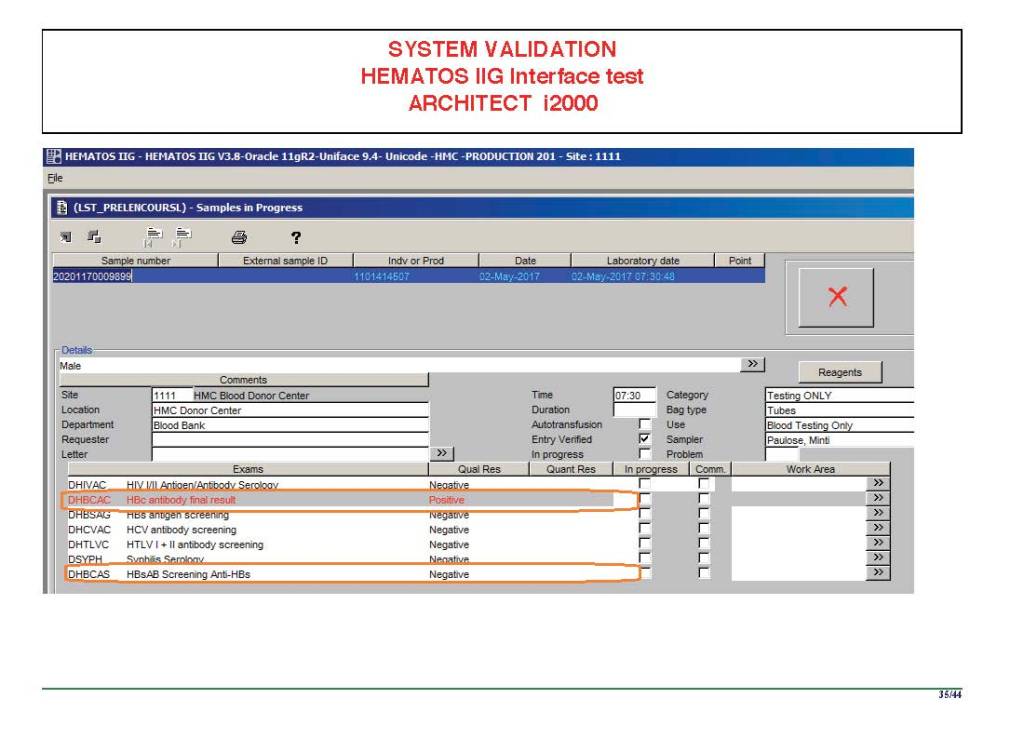

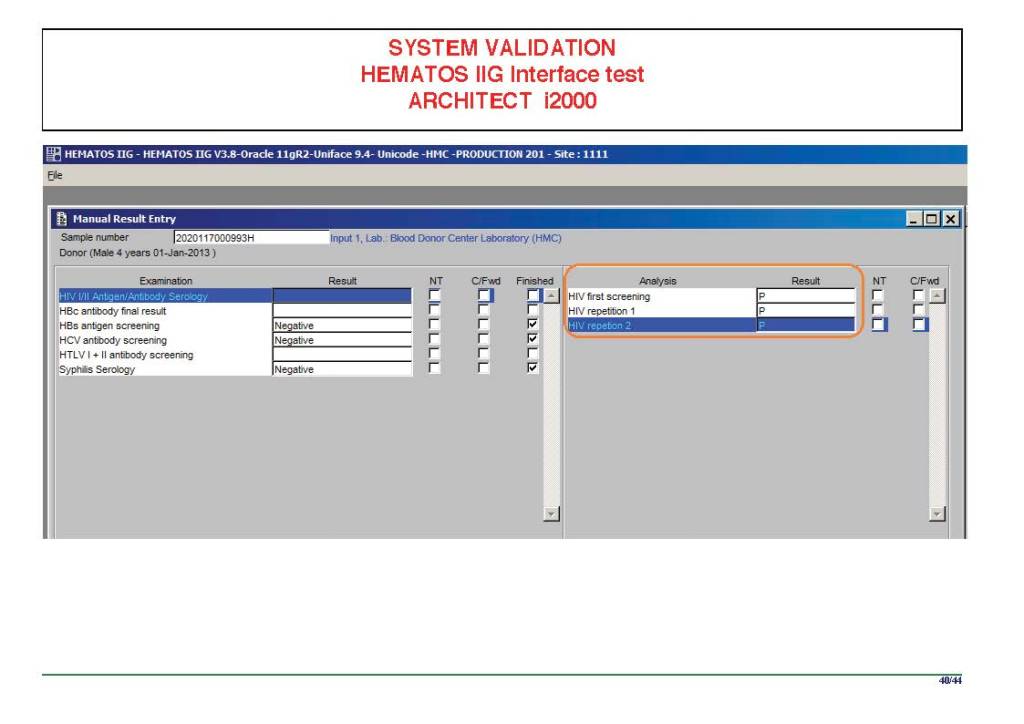

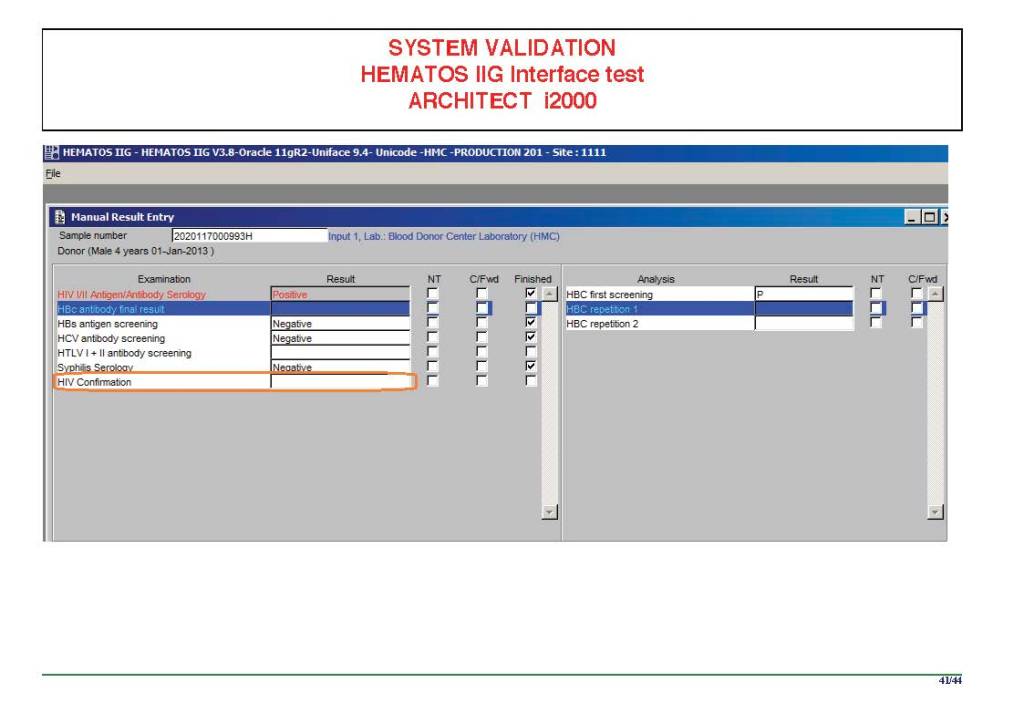

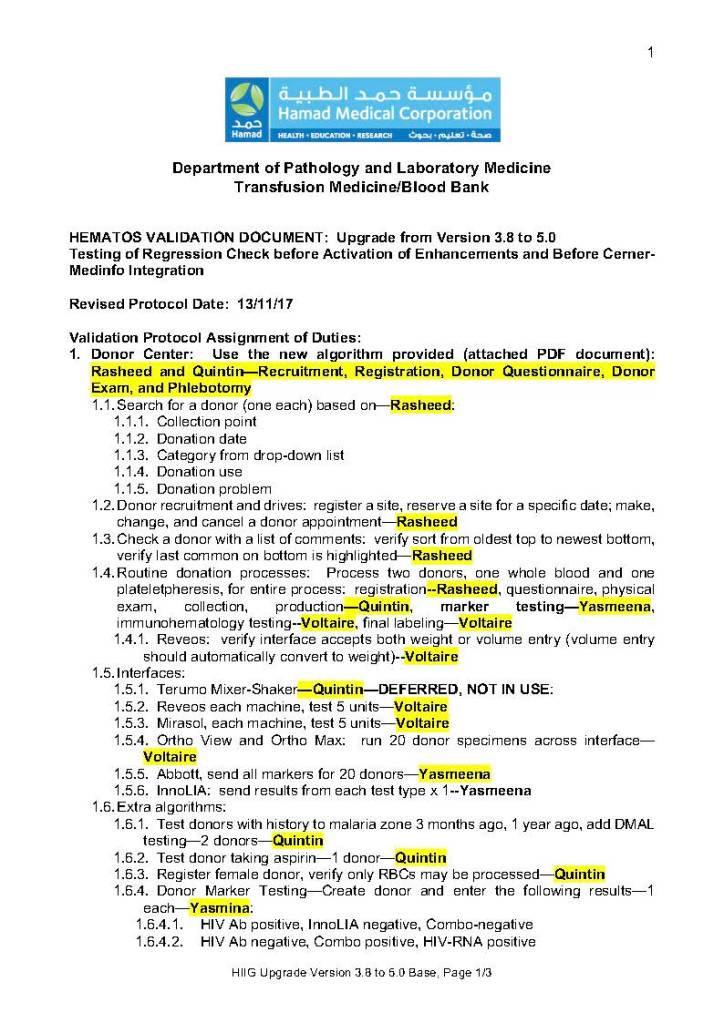

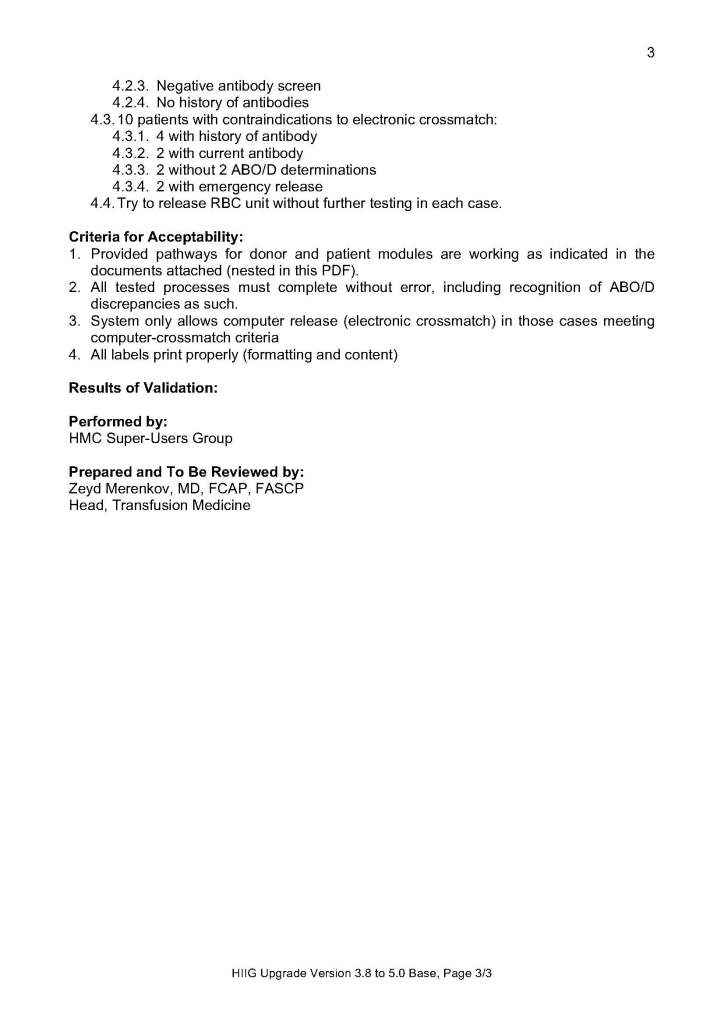

When I was at HMC, which included many hospital blood banks, we standardized our methodologies and processes as much as possible, but some differences remained because of the equipment at each site. When we built the blood bank computer system, we had to build a specific process for each test, taking into account the methodology and the type of reagents used. We followed the manufacturer's recommendations when establishing the criteria for each test.
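To illustrate what "a specific process for each test" can mean, here is a minimal sketch of ABO forward/reverse interpretation where the cutoff for calling a reaction positive lives in a per-site, per-method definition rather than in the code. Everything here is an assumption for illustration: `AssayDefinition` and `positive_min_grade` are hypothetical names, reaction grades are simplified to integers 0 to 4, and weak (w+) or mixed-field reactions and the D typing are left out.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class AssayDefinition:
    """Hypothetical per-site, per-method ABO assay setup."""
    site: str
    method: str              # e.g. "tube" or an analyzer model (illustrative)
    positive_min_grade: int  # lowest grade (0-4) called positive, per the reagent insert


# (anti-A positive, anti-B positive) -> forward ABO group
FORWARD_TYPE = {
    (True, False): "A",
    (False, True): "B",
    (True, True): "AB",
    (False, False): "O",
}

# Forward group -> expected reverse reactions (A1 cells positive, B cells positive)
EXPECTED_REVERSE = {
    "A": (False, True),
    "B": (True, False),
    "AB": (False, False),
    "O": (True, True),
}


def interpret_abo(assay: AssayDefinition, anti_a: int, anti_b: int,
                  a1_cells: int, b_cells: int) -> Optional[str]:
    """Return the ABO group, or None when forward and reverse types disagree."""

    def positive(grade: int) -> bool:
        return grade >= assay.positive_min_grade

    forward = FORWARD_TYPE[(positive(anti_a), positive(anti_b))]
    reverse = (positive(a1_cells), positive(b_cells))
    if reverse != EXPECTED_REVERSE[forward]:
        return None  # ABO discrepancy: hold for manual review
    return forward
```

Keeping the cutoff in data means a site that changes analyzers or reagents updates its definition, not the interpretation logic.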

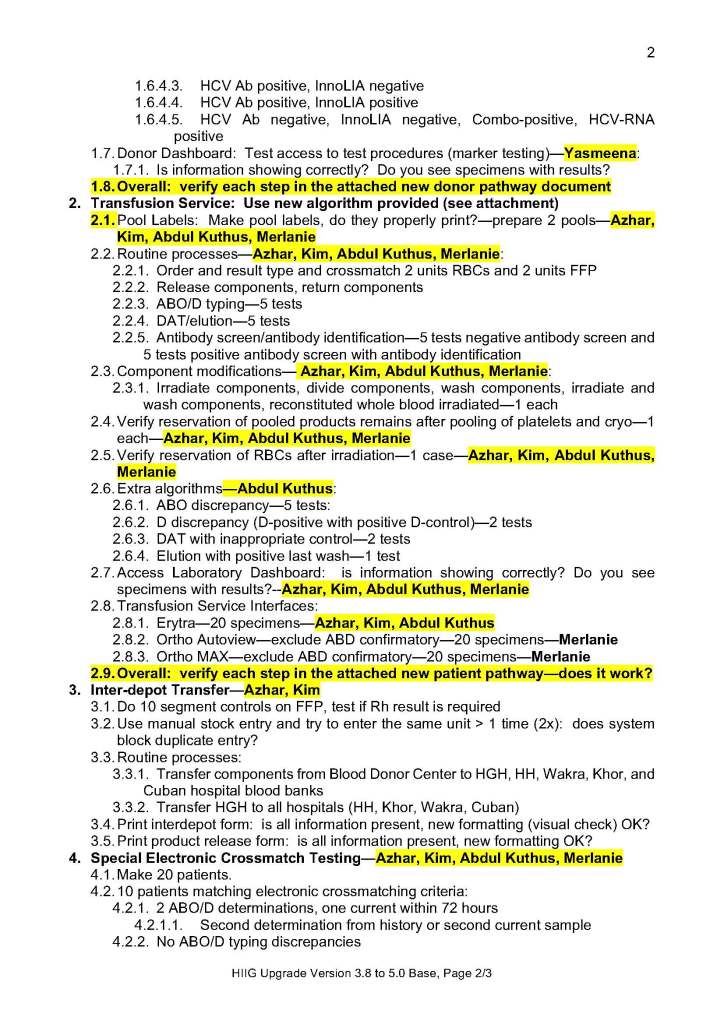

There were manual tests and multiple automated tests, e.g., for ABO/D typing. Rules defined when automated release was allowed and when a manual review was necessary. Complicated cases were referred to the transfusion medicine physician for review and comment.
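A release rule of this kind can be expressed as a short routing function. This is a hypothetical rule set for illustration only (the names `Route` and `route_abo_d_result` are invented): it assumes a result auto-releases only when it came from an instrument, was interpreted without a discrepancy, and agrees with any historical type; manual tests and mismatches go to a technologist, and cases flagged as complicated go to the physician.

```python
from enum import Enum
from typing import Optional


class Route(Enum):
    AUTO_RELEASE = "auto-release"
    TECH_REVIEW = "technologist review"
    PHYSICIAN_REVIEW = "transfusion medicine physician review"


def route_abo_d_result(automated: bool, interpreted: Optional[str],
                       historical: Optional[str], complicated: bool) -> Route:
    """Decide how an ABO/D result is released (hypothetical rule set).

    interpreted: e.g. "A Pos", or None if the instrument flagged a discrepancy.
    historical:  the patient's previous type, or None if there is no history.
    """
    if complicated:
        return Route.PHYSICIAN_REVIEW
    if not automated or interpreted is None:
        # Manual tests and instrument discrepancies need a technologist.
        return Route.TECH_REVIEW
    if historical is not None and historical != interpreted:
        # Disagreement with history may indicate a mislabelled sample.
        return Route.TECH_REVIEW
    return Route.AUTO_RELEASE
```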

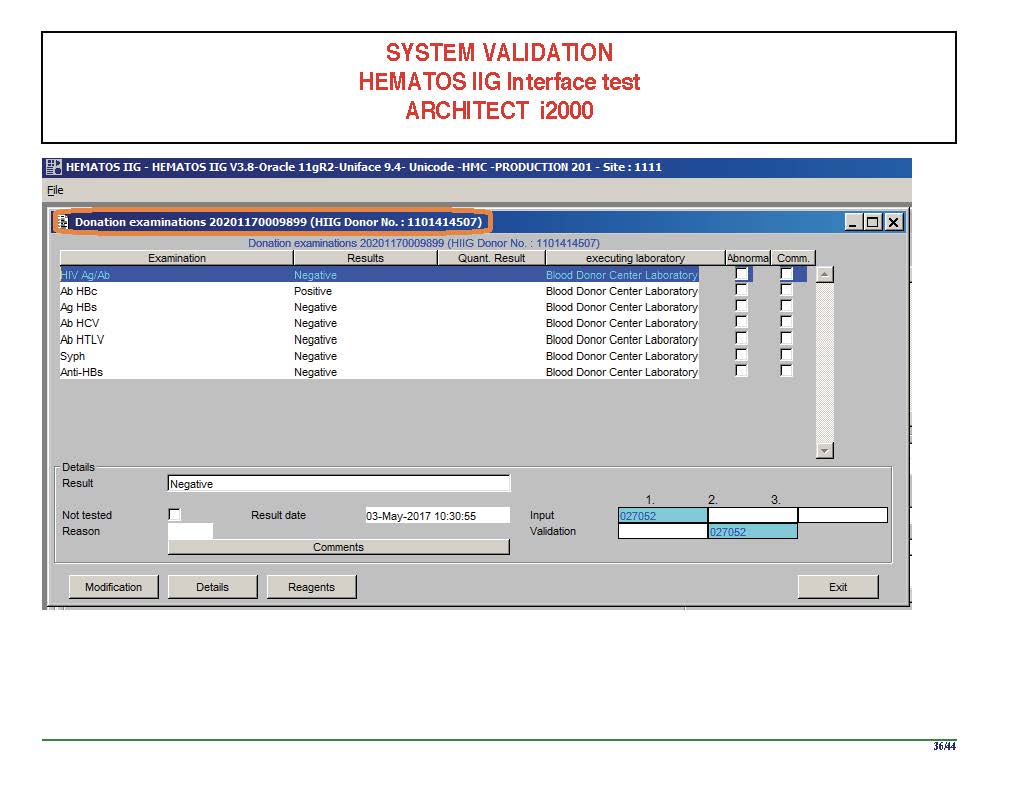

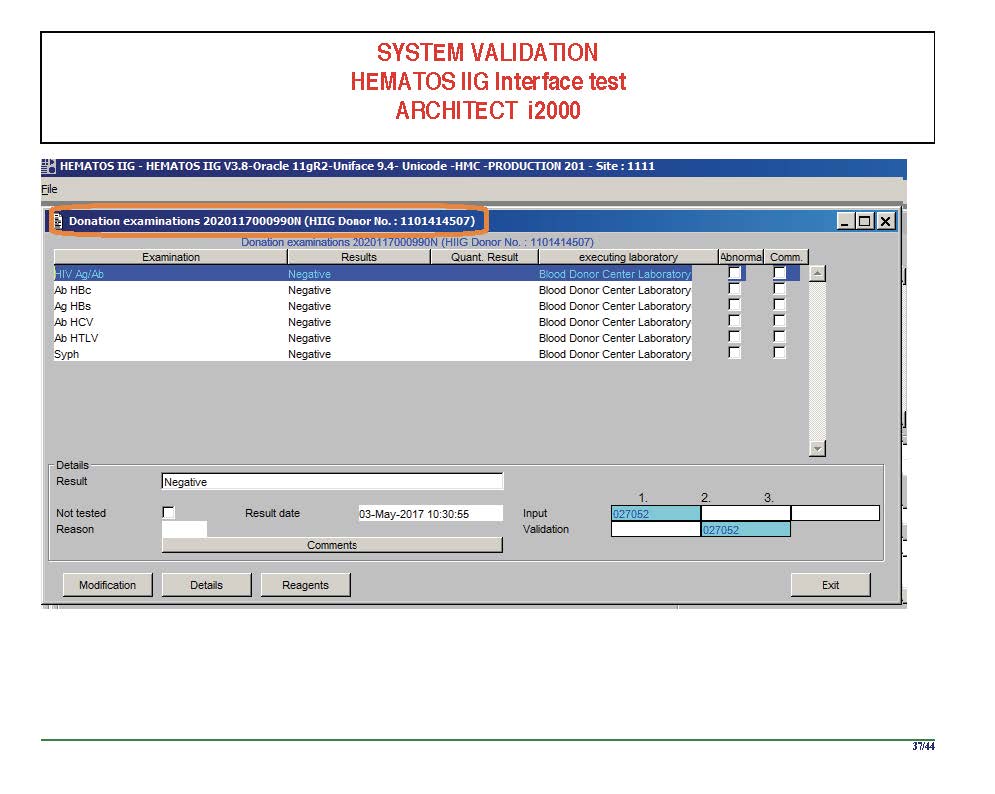

In our system, all tests could be ordered and performed from all sites, and transfusion medicine physicians could review all work from all sites. Technologists, however, were restricted to the sites where they worked or that they supervised, except for reviewing results.
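The access rules just described can be sketched as a single permission check. This is an illustrative model, not how any particular blood bank system implements security: the `User` structure, the two role names, and the two actions ("view" for reviewing results, "work" for performing or signing off work at a site) are assumptions.

```python
from dataclasses import dataclass, field


@dataclass
class User:
    name: str
    role: str  # "physician" or "technologist" (illustrative roles)
    sites: set[str] = field(default_factory=set)  # sites worked at or supervised


def is_allowed(user: User, site: str, action: str) -> bool:
    """Hypothetical permission check for one user, site, and action."""
    if action == "view":
        return True  # results from every site are visible to both roles
    if action == "work":
        # Physicians review work from every site; technologists only their own.
        return user.role == "physician" or site in user.sites
    raise ValueError(f"unknown action: {action}")
```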

All patient results across the entire system, from both the current and the previous computer system, were available and could be used to create and enforce rules.
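One common reason to merge old and new records is that antibodies can fall below detectable levels, so a patient's history still matters when today's screen is negative. The sketch below assumes a hypothetical record layout of `(patient_id, antibody specificity or None)` per system and a naming convention of "anti-X" for an antibody against antigen X; it collects specificities from every system and checks that a unit is confirmed negative for each corresponding antigen.

```python
from typing import Iterable, Optional, Tuple

Record = Tuple[str, Optional[str]]  # (patient ID, antibody specificity or None)


def antibody_history(patient_id: str, *systems: Iterable[Record]) -> set[str]:
    """Union of antibody specificities for one patient across all source systems."""
    return {spec for system in systems for pid, spec in system
            if pid == patient_id and spec}


def required_negative_antigens(antibodies: set[str]) -> set[str]:
    """Map e.g. 'anti-K' to 'K': units must be negative for that antigen."""
    return {ab[len("anti-"):] for ab in antibodies if ab.startswith("anti-")}


def unit_is_acceptable(unit_negative_for: set[str], antibodies: set[str]) -> bool:
    """True if the unit is confirmed negative for every required antigen."""
    return required_negative_antigens(antibodies) <= unit_negative_for
```

Passing the systems as lists keeps the lookup repeatable; a one-shot iterator would be consumed on the first call.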

In general, certain categories of results were referred to the transfusion medicine physician for review, but any test could be reviewed by him/her, especially if a clinician requested it. Everything was documented in the software.
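Automatic referral plus documented review can be modelled as a category lookup and an append-only log. This is a minimal sketch under assumptions: the referral categories, the `ReviewEntry` fields, and the `ReviewLog` class are hypothetical, and "append-only" here only means the class exposes no edit or delete method.

```python
from dataclasses import dataclass
from datetime import datetime, timezone

# Hypothetical categories that are always referred to a physician.
REFERRAL_CATEGORIES = {"antibody identification", "positive DAT", "ABO discrepancy"}


def needs_physician_review(category: str) -> bool:
    return category in REFERRAL_CATEGORIES


@dataclass(frozen=True)
class ReviewEntry:
    result_id: str
    reviewer: str
    reason: str       # e.g. "referral category" or "clinician request"
    comment: str
    recorded_at: datetime


class ReviewLog:
    """Record of reviews: entries can be added and read, with no edit or delete method."""

    def __init__(self) -> None:
        self._entries: list[ReviewEntry] = []

    def record(self, result_id: str, reviewer: str, reason: str, comment: str) -> ReviewEntry:
        entry = ReviewEntry(result_id, reviewer, reason, comment,
                            datetime.now(timezone.utc))
        self._entries.append(entry)
        return entry

    def for_result(self, result_id: str) -> list[ReviewEntry]:
        return [e for e in self._entries if e.result_id == result_id]
```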

Component modification processes (thawing, aliquoting, irradiating, pooling, washing) were the same at all sites AND at the Blood Donor Center. Each modification changed the component's ISBT designation, a new ISBT label was printed, and the component's outdate was updated.
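The outdate rule for a modified component is usually "the earlier of the original outdate and the post-modification shelf life." The sketch below shows that rule plus a new designation and label. It is an illustration only: the suffixes are invented and are not real ISBT 128 product codes, and the shelf-life values are placeholders that must come from local standards and manufacturer instructions.

```python
from dataclasses import dataclass, replace
from datetime import datetime, timedelta
from typing import Optional


@dataclass(frozen=True)
class Component:
    unit_number: str
    product_code: str  # hypothetical designation, not a real ISBT 128 code
    expires: datetime


# Hypothetical: suffix for the new designation and post-modification shelf life.
# Placeholder values only. None means the component keeps its original outdate.
MODIFICATIONS: dict[str, tuple[str, Optional[timedelta]]] = {
    "irradiate": ("-IRR", timedelta(days=28)),
    "wash": ("-WSH", timedelta(hours=24)),
    "thaw": ("-THW", timedelta(hours=24)),
    "pool": ("-POOL", timedelta(hours=4)),
    "aliquot": ("-ALQ", None),
}


def modify(c: Component, kind: str, when: datetime) -> Component:
    """Return a new component record with updated designation and outdate."""
    suffix, shelf_life = MODIFICATIONS[kind]
    expires = c.expires if shelf_life is None else min(c.expires, when + shelf_life)
    return replace(c, product_code=c.product_code + suffix, expires=expires)


def label_text(c: Component) -> str:
    """Text for the reprinted label (format is illustrative)."""
    return f"{c.unit_number} {c.product_code} EXP {c.expires:%Y-%m-%d %H:%M}"
```

Returning a new record instead of mutating the original keeps the pre-modification component available for traceability.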

Antibody workups were still performed manually, but direct antiglobulin tests could be manual or automated. In each case, a transfusion medicine physician reviewed the results and added an interpretative comment, which might include recommendations for the selection and use of components. Rules could then be built into the software to enforce these recommendations.
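A physician's recommendation becomes enforceable once it is stored as a structured requirement that is checked at issue time. Here is a minimal sketch, assuming a hypothetical `Requirement` record with a required unit attribute (e.g. "irradiated") and the comment it came from; the check returns blocking messages instead of a bare yes/no so staff can see why a unit was refused.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Requirement:
    """A physician-entered rule attached to a patient (hypothetical structure)."""
    attribute: str  # unit attribute required, e.g. "irradiated" (illustrative)
    note: str       # the interpretative comment the rule came from


def blocking_messages(unit_attributes: set[str], requirements: list[Requirement]) -> list[str]:
    """Return one message per unmet requirement; an empty list means the unit may be issued."""
    return [
        f"Unit is not {r.attribute}: {r.note}"
        for r in requirements
        if r.attribute not in unit_attributes
    ]
```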

To Be Continued:

1/7/20